7 Ways to Operationalize NIST AI RMF in Your Organization

Use the NIST AI RMF to set ownership, inventory AI use, apply controls, and build a review cadence your leaders can trust.

You're being pushed to use AI, but pressure is not a control. If ownership is fuzzy, approvals are informal, and board questions keep getting harder, your risk grows faster than your value.

That's why implementing the NIST AI Risk Management Framework (AI RMF) matters. It gives you a way to turn AI risk into governance, process, and repeatable habits, not a policy file nobody uses.

In practice, operationalize means this: you move from ideas to day-to-day decisions. You define who owns what, what gets reviewed, what gets tested, and what leaders see on a schedule. The seven steps below make that real.

Start by naming an AI owner and setting decision rights

Most AI programs don't fail because the models are weak. They fail because nobody owns the tradeoffs. One team wants speed. Another wants control. A vendor adds AI features. Then nobody knows who can approve, pause, or escalate.

The Govern function in the NIST AI RMF starts here. You need one accountable executive for AI risk, even if that person coordinates across legal, security, data, compliance, and business teams. In a mid-market company, that may not be a full-time AI officer. It may be a COO, CIO, CISO, GC, or another leader with enough authority to settle conflicts.

If you don't have that capacity today, outside support from a cybersecurity strategy advisor for CEOs can help you set ownership, priorities, and reporting without waiting for a full-time hire.

Choose one accountable leader, then define who advises and who approves

Keep the model simple. One person is accountable. Other teams advise, review, or approve based on the decision.

A light RACI-style map works well. Use it for four things first: new AI use case approval, model or prompt changes, vendor review, and incident escalation. You don't need a large committee for every low-risk tool. You do need a clear path for who decides.

For example, a business leader can sponsor a use case. Security, legal, and data teams can review it. The accountable executive can approve or reject it. If the use case touches regulated data or customer decisions, require a higher level of sign-off.
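If you keep the map in a spreadsheet or ticketing tool, it helps to treat it as data rather than a slide. The Python sketch below is a minimal, hypothetical example: the role names, the decision keys, and the extra `executive_committee` sign-off are illustrative assumptions, not roles the NIST AI RMF prescribes. It encodes the two rules from the example above: each decision has one approver, and regulated data or customer decisions add a higher sign-off. A decision with no defined path raises an error, so gaps surface instead of being guessed at.

```python
from dataclasses import dataclass

# Hypothetical decision-rights map. Role names are placeholders, not NIST-defined.
DECISION_RIGHTS = {
    "new_use_case": {"advise": ["security", "legal", "data"], "approve": "accountable_exec"},
    "model_or_prompt_change": {"advise": ["data", "security"], "approve": "use_case_owner"},
    "vendor_review": {"advise": ["security", "legal", "procurement"], "approve": "accountable_exec"},
    "incident_escalation": {"advise": ["security", "legal"], "approve": "accountable_exec"},
}

# Assumed extra sign-off for regulated data or customer-facing decisions.
HIGHER_SIGNOFF = "executive_committee"


@dataclass
class Request:
    decision: str
    touches_regulated_data: bool = False
    affects_customer_decisions: bool = False


def approvers_for(req: Request) -> list[str]:
    """Return the roles that must sign off, in order."""
    if req.decision not in DECISION_RIGHTS:
        raise ValueError(f"No decision path defined for {req.decision!r}")
    approvers = [DECISION_RIGHTS[req.decision]["approve"]]
    if req.touches_regulated_data or req.affects_customer_decisions:
        approvers.append(HIGHER_SIGNOFF)
    return approvers


print(approvers_for(Request("new_use_case")))  # ['accountable_exec']
print(approvers_for(Request("new_use_case", touches_regulated_data=True)))  # ['accountable_exec', 'executive_committee']
```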

What matters is not the chart. What matters is that people can act without guessing.

Set board and executive reporting lines before a problem forces them

You also need reporting lines before something goes wrong. If you wait for an incident, you will brief under pressure and with weak facts.

Your executive team should see a regular summary of top AI use cases, their risk tier, open exceptions, third-party exposure, and material changes since the last review. Your board doesn't need model detail. It needs a clear view of where AI affects customers, employees, data, operations, and trust.

If leaders can't explain who owns AI risk, they don't control it yet.

That visibility is the first sign your NIST AI RMF implementation is becoming operational, not theoretical.

Create an AI inventory so you know what is in use

You can't manage what you can't see. That sounds obvious, yet many organizations still track only approved AI projects. Meanwhile, embedded AI features spread through the business without review.

This is where the Map function matters. Your job is to identify AI use, then classify it by purpose, data sensitivity, and business impact. That includes homegrown models, vendor tools, and software with AI turned on by default.

Find AI already running in the business, not just the projects you approved

Start with what people are already using. Ask business units, procurement, IT, security, and vendor management for a current view. Then compare that list to identity logs, spend data, and core platforms.

You'll often find more than expected. Common examples include copilots in productivity suites, website chatbots, workflow automation tools, analytics platforms, HR screening tools, support systems, and SaaS products that added AI quietly in a recent release.

This is also where shadow AI shows up. Employees paste data into public tools because they want speed. Teams test new features before policy catches up. Vendors switch on AI features because the contract allows it. If you don't inventory those uses, your control model starts with blind spots.
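Once each source is reduced to a list of tool names, the comparison step is simple set arithmetic. This Python sketch assumes you have already exported names from self-reports, identity logs, and spend data; the sample names and the `reconcile` and `normalize` helpers are hypothetical, and real tool names rarely match this cleanly. Anything observed but never reported is a candidate for the shadow AI review.

```python
def normalize(names: set[str]) -> set[str]:
    """Trim and case-fold names so 'Copilot ' and 'copilot' match."""
    return {n.strip().casefold() for n in names}


def reconcile(reported: set[str], *observed_sources: set[str]) -> dict[str, set[str]]:
    """Compare self-reported AI tools against evidence from other sources."""
    known = normalize(reported)
    observed = set().union(*(normalize(s) for s in observed_sources))
    return {
        "unreported": observed - known,  # seen in logs or spend, never reported: likely shadow AI
        "unverified": known - observed,  # reported, but no trace in any source
        "confirmed": known & observed,
    }


# Hypothetical inputs; real exports will need parsing and de-duplication.
reported = {"Copilot", "Support Chatbot"}
identity_logs = {"copilot", "Support Chatbot", "ChatGPT"}
spend_data = {"Copilot", "Resume Screener"}

gaps = reconcile(reported, identity_logs, spend_data)
print(sorted(gaps["unreported"]))  # ['chatgpt', 'resume screener']
```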

Rank use cases by impact on people, data, operations, and trust

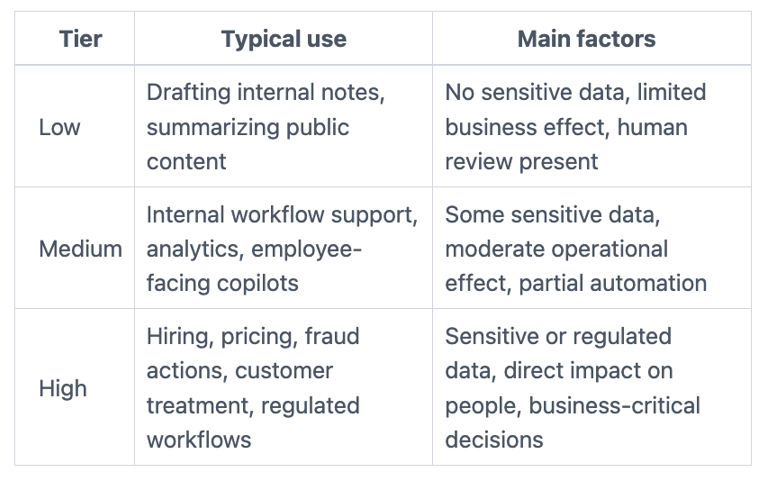

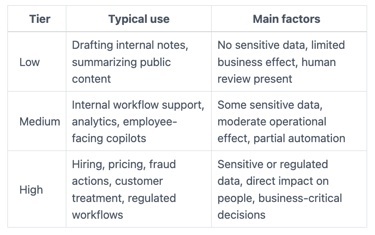

After you find the tools, tier them. A simple low, medium, high model is enough for most organizations.

This quick view helps frame the tiers:

Use practical criteria. Ask whether the AI affects customers, employees, regulated data, business continuity, or automated decisions. Also ask what happens if the tool is wrong, biased, unavailable, or misused.

The point is not perfect scoring. The point is separating low-risk experiments from high-impact uses that need deeper review.
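If you want the tiering rule written down so two reviewers reach the same answer, it can be a few lines of logic. The Python sketch below is one possible rule, assumed for illustration rather than taken from the NIST AI RMF: regulated data, or automated decisions about customers or employees, lands in high; any other criterion from the list lands in medium; everything else is low. Adjust the rule to your own risk appetite.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    affects_customers: bool = False
    affects_employees: bool = False
    uses_regulated_data: bool = False
    affects_business_continuity: bool = False
    makes_automated_decisions: bool = False


def tier(uc: UseCase) -> str:
    """Illustrative low/medium/high rule; not a NIST-defined scoring method."""
    touches_people = uc.affects_customers or uc.affects_employees
    # High: regulated data, or automated decisions that land on people.
    if uc.uses_regulated_data or (uc.makes_automated_decisions and touches_people):
        return "high"
    # Medium: any remaining criterion applies.
    if touches_people or uc.affects_business_continuity or uc.makes_automated_decisions:
        return "medium"
    return "low"


for uc in [
    UseCase("Meeting summarizer"),
    UseCase("Website chatbot", affects_customers=True),
    UseCase("HR screening tool", affects_employees=True, makes_automated_decisions=True),
]:
    print(uc.name, "->", tier(uc))  # low, then medium, then high
```

The questions about what happens when a tool is wrong, biased, unavailable, or misused are harder to reduce to flags; record those answers next to the tier so reviewers can see why it landed there.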

Set minimum controls for every AI use case, then raise the bar for high-risk uses

Once you have inventory and tiers, set a control floor. Every AI use case should meet a minimum standard. Then higher-risk uses should face deeper review, stronger testing, and tighter oversight.

This is where the Measure and Manage functions become practical. You are not trying to block AI. You are trying to make AI adoption safer, more repeatable, and easier to defend when leadership asks why a tool was approved.

That same logic matters at the board level. If your directors need clearer oversight, guidance on cybersecurity governance for boards can help frame what they should review and what management should own.

Use a baseline checklist for privacy, security, bias, and accuracy

Keep the baseline short enough that teams will use it. For every AI use case, confirm six basics: what data goes in, what data comes out, who can access it, how results are reviewed, what gets logged, and what vendor terms apply.

For low-risk tools, that may be enough. For higher-risk systems, add a deeper path. Review training or input data quality, test for harmful bias, check output accuracy, confirm retention terms, and document known limits.

Don't let the checklist turn into theater. If nobody can explain the control in plain English, it probably won't hold up in practice.
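One way to keep the checklist short and honest is to require a plain-language answer for each item and flag the blanks. In this hypothetical Python sketch, the item keys paraphrase the six basics and the deeper path described above, and applying the deeper path to both medium and high tiers is an assumption; your cut line may differ.

```python
# Six baseline questions every AI use case answers (keys paraphrase the text).
BASELINE = ["data_in", "data_out", "access", "output_review", "logging", "vendor_terms"]

# Deeper path for higher-risk tiers (assumed here to mean medium and high).
DEEPER = ["input_data_quality", "bias_testing", "accuracy_check", "retention_terms", "known_limits"]


def missing_controls(tier: str, answers: dict[str, str]) -> list[str]:
    """List required items that have no plain-English answer yet."""
    required = BASELINE + (DEEPER if tier in ("medium", "high") else [])
    # Blank or whitespace-only answers count as missing.
    return [item for item in required if not answers.get(item, "").strip()]


answers = {item: "documented in the intake form" for item in BASELINE}
print(missing_controls("low", answers))   # []
print(missing_controls("high", answers))  # the five deeper-path items
```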

Require human oversight where AI outputs could change important decisions

Some uses need a person in the loop, every time. Hiring decisions, pricing changes, fraud actions, safety-related outputs, legal exposure, and regulated workflows should not run on AI output alone.

Human oversight is not a vague idea. Define who reviews the output, what they must check, and when they can override the tool. Also define what counts as an exception.

If a model recommends, a person should still decide. If a tool flags, a person should still validate. That line protects people, and it protects your organization.
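To make the gate concrete, here is a hypothetical Python sketch of a pre-execution check. The category names, the required checks, and the rule that an override needs a written reason are assumptions for illustration. What it shows is that, in the listed categories, the tool's recommendation never becomes the final decision on its own.

```python
from dataclasses import dataclass, field
from typing import Optional

# Categories that should not run on AI output alone (keys are illustrative).
HUMAN_REQUIRED = {"hiring", "pricing", "fraud_action", "safety", "legal", "regulated"}

# Hypothetical checks a reviewer must confirm before acting.
REQUIRED_CHECKS = ["inputs_verified", "output_plausible"]


@dataclass
class Decision:
    category: str
    ai_recommendation: str
    reviewer: Optional[str] = None
    checks_done: list[str] = field(default_factory=list)
    final_decision: Optional[str] = None
    override_reason: Optional[str] = None


def can_execute(d: Decision) -> bool:
    """True only when a named person has reviewed and decided, where required."""
    if d.category not in HUMAN_REQUIRED:
        return True
    if not d.reviewer or d.final_decision is None:
        return False  # the model recommended; nobody has decided yet
    if any(check not in d.checks_done for check in REQUIRED_CHECKS):
        return False
    # Overriding the tool is allowed, but the reason must be recorded.
    if d.final_decision != d.ai_recommendation and not d.override_reason:
        return False
    return True


d = Decision("hiring", ai_recommendation="reject")
print(can_execute(d))  # False
d.reviewer, d.checks_done, d.final_decision = "hr_lead", list(REQUIRED_CHECKS), "advance"
print(can_execute(d))  # False: override without a reason
d.override_reason = "Relevant experience missed by the screening model"
print(can_execute(d))  # True
```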

Test AI in the real world, not just in demos

A vendor demo is not proof. Lab performance is not proof either. Your NIST AI RMF implementation only becomes credible when you test AI in the context where your people will use it.

That means real prompts, real workflows, real users, and real failure conditions. You need measurable performance, not broad claims.

Check for accuracy, edge cases, and failure patterns that matter to your business

Start with business-relevant tests. If the tool writes summaries, check whether it drops key facts. If it helps with decisions, test whether it sounds confident when it is wrong. If it touches external content, check for harmful outputs or prompt injection exposure.

Also look for uneven behavior across user groups, business units, or data types. A model that performs well in one context may fail badly in another.

Vendor claims can guide your testing, but they can't replace it. Your risk sits in your environment, not theirs.
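Once reviewers have labeled each output from a pilot, scoring it can be this simple. The Python sketch below assumes a hypothetical list of labeled results, each tagged with a group (user group, business unit, or data type), whether the output was correct, and whether it sounded confident. It reports accuracy per group, flags groups that trail the best one by more than an assumed 10-point gap, and measures how often wrong answers were delivered confidently.

```python
from collections import defaultdict

# Hypothetical labeled results from a pilot with real prompts and real users.
results = [
    {"group": "finance", "correct": True, "confident": True},
    {"group": "finance", "correct": True, "confident": True},
    {"group": "finance", "correct": True, "confident": False},
    {"group": "finance", "correct": True, "confident": True},
    {"group": "support", "correct": True, "confident": True},
    {"group": "support", "correct": True, "confident": False},
    {"group": "support", "correct": False, "confident": True},
    {"group": "support", "correct": False, "confident": False},
]


def accuracy_by_group(results: list[dict]) -> dict[str, float]:
    counts = defaultdict(lambda: [0, 0])  # group -> [correct, total]
    for r in results:
        counts[r["group"]][1] += 1
        counts[r["group"]][0] += int(r["correct"])
    return {g: correct / total for g, (correct, total) in counts.items()}


def uneven_groups(acc: dict[str, float], max_gap: float = 0.10) -> list[str]:
    """Groups whose accuracy trails the best group by more than max_gap (assumed threshold)."""
    best = max(acc.values())
    return sorted(g for g, a in acc.items() if best - a > max_gap)


def confident_when_wrong(results: list[dict]) -> float:
    """Share of wrong outputs that were delivered confidently."""
    wrong = [r for r in results if not r["correct"]]
    return sum(r["confident"] for r in wrong) / len(wrong) if wrong else 0.0


acc = accuracy_by_group(results)
print(acc)                            # {'finance': 1.0, 'support': 0.5}
print(uneven_groups(acc))             # ['support']
print(confident_when_wrong(results))  # 0.5
```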

Decide what happens when the tool is wrong or unavailable

You also need a fallback plan. If the tool fails, who gets alerted? If output quality drops, who pauses use? If the vendor has an outage, what process takes over?

Write those answers before rollout. Then train the teams who rely on the tool. A bad output should trigger review. A repeated failure should trigger escalation. In some cases, the right move is simple: stop using the tool until the issue is fixed.

That is not overreaction. That is operational discipline.
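Written down as a small state machine, the escalation rules become easier to train on and to audit. This Python sketch is hypothetical: the `ToolMonitor` class, the three-strike threshold, and the message format are assumptions. It encodes the sequence above: a bad output triggers review, repeated failures pause the tool and switch to the fallback process, an outage switches to the fallback immediately, and use resumes only when someone explicitly clears it.

```python
from dataclasses import dataclass

# Assumed threshold: this many bad outputs counts as a "repeated failure".
ESCALATE_AFTER = 3


@dataclass
class ToolMonitor:
    tool: str
    owner: str     # who gets alerted and decides on pausing
    fallback: str  # the process that takes over when the tool is paused
    bad_outputs: int = 0
    paused: bool = False

    def record_bad_output(self) -> str:
        self.bad_outputs += 1
        if self.bad_outputs >= ESCALATE_AFTER:
            self.paused = True
            return f"ESCALATE: pause {self.tool}, alert {self.owner}, switch to {self.fallback}"
        return f"REVIEW: {self.owner} reviews bad output #{self.bad_outputs}"

    def record_outage(self) -> str:
        self.paused = True
        return f"OUTAGE: alert {self.owner}, switch to {self.fallback}"

    def resume(self) -> None:
        """Call only after the issue is fixed; clears the failure count."""
        self.bad_outputs = 0
        self.paused = False


monitor = ToolMonitor("Support Chatbot", owner="support_lead", fallback="human agent queue")
for _ in range(3):
    print(monitor.record_bad_output())
```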

Build an operating rhythm for review, exceptions, and updates

AI governance does not stick through a one-time launch. It sticks when review becomes part of your normal management rhythm.

Most organizations don't need an elaborate cadence. A monthly operating review and a quarterly leadership review will cover a lot of ground. The point is to inspect changes, not admire a policy.

If you want practical templates for that kind of executive cadence, CISO resources and templates can help you structure reporting and decision rights.

Review new use cases, model changes, and vendor updates on a fixed schedule

AI tools change quickly. Vendors add features. Models get retrained. Integrations expand. A tool you approved six months ago may carry a different risk today.

So review new use cases, material changes, exceptions, and incidents on a fixed schedule. Don't rely on informal updates. Require teams to surface changes that alter data use, decision impact, or customer exposure.

That discipline reduces surprise. It also gives leaders a current view, not a stale one.
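A fixed schedule is easier to keep when the inventory itself tells you what is due. The Python sketch below assumes each inventory entry carries a tier, a last-review date, and a flag teams set when a change alters data use, decision impact, or customer exposure. The tier intervals and the sample entries are hypothetical, not NIST requirements.

```python
from datetime import date, timedelta

# Assumed review intervals by tier; align these with your monthly and quarterly reviews.
REVIEW_INTERVAL = {
    "high": timedelta(days=30),
    "medium": timedelta(days=90),
    "low": timedelta(days=180),
}


def due_for_review(items: list[dict], today: date) -> list[str]:
    """Names of items overdue for review or materially changed since the last one."""
    due = []
    for item in items:
        overdue = today - item["last_review"] > REVIEW_INTERVAL[item["tier"]]
        if overdue or item["material_change"]:
            due.append(item["name"])
    return due


# Hypothetical inventory entries.
items = [
    {"name": "HR screening tool", "tier": "high", "last_review": date(2025, 5, 1), "material_change": False},
    {"name": "Website chatbot", "tier": "medium", "last_review": date(2025, 6, 1), "material_change": True},
    {"name": "Meeting summarizer", "tier": "low", "last_review": date(2025, 3, 1), "material_change": False},
]
print(due_for_review(items, today=date(2025, 6, 30)))  # ['HR screening tool', 'Website chatbot']
```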

Track a small set of metrics leaders can actually use

Keep metrics tight. Too many dashboards create noise.

A useful report often includes only five items: number of AI use cases by tier, exceptions granted, incidents or near misses, review completion rate, and major changes since the last report. Trends matter more than raw counts. Actions matter more than color coding.

If a metric doesn't change a decision, drop it. If a trend suggests rising risk, assign an owner and a date. That's how governance becomes inspectable execution.
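If the report is generated from the inventory rather than assembled by hand, the trend check comes almost for free. This hypothetical Python sketch builds the five items listed above and flags metrics that moved in the wrong direction since the previous report. Which direction counts as worse for each metric is an assumption you should set yourself.

```python
from collections import Counter

# Metrics where an increase suggests rising risk (an assumption for this sketch).
RISING_IS_BAD = ["exceptions_granted", "incidents_or_near_misses"]


def leadership_report(use_cases: list[dict], exceptions: int, incidents: int,
                      reviews_due: int, reviews_done: int, major_changes: list[str]) -> dict:
    """Build the five-item report: tiers, exceptions, incidents, completion, changes."""
    return {
        "use_cases_by_tier": dict(Counter(uc["tier"] for uc in use_cases)),
        "exceptions_granted": exceptions,
        "incidents_or_near_misses": incidents,
        "review_completion_rate": reviews_done / reviews_due if reviews_due else 1.0,
        "major_changes": major_changes,
    }


def rising_risks(current: dict, previous: dict) -> list[str]:
    """Metrics trending the wrong way; each one needs an owner and a date."""
    flags = [k for k in RISING_IS_BAD if current[k] > previous[k]]
    if current["review_completion_rate"] < previous["review_completion_rate"]:
        flags.append("review_completion_rate")
    return flags


use_cases = [{"tier": "high"}, {"tier": "medium"}, {"tier": "low"}, {"tier": "low"}]
previous = leadership_report(use_cases, exceptions=1, incidents=0,
                             reviews_due=4, reviews_done=4, major_changes=[])
current = leadership_report(use_cases, exceptions=3, incidents=0, reviews_due=4, reviews_done=3,
                            major_changes=["Vendor enabled AI summaries in support desk"])
print(rising_risks(current, previous))  # ['exceptions_granted', 'review_completion_rate']
```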

Successful NIST AI RMF implementation is not about having more slides. It's about having a rhythm leaders can trust.

You don't need to make AI governance perfect on day one. You do need to make it visible, owned, and repeatable.

Start with the seven basics: ownership, decision rights, inventory, tiering, baseline controls, real-world testing, and review cadence. Those steps turn the NIST AI RMF from a framework on paper into a way your organization actually works.

Pick one step to tighten this month. Then assign an owner, set the review path, and put it on a calendar. That's how unclear AI risk becomes a managed business decision.

© 2026. All rights reserved.
