Agentic AI: The New Liability Boards Aren't Talking About Yet

See where agentic AI can expose your board to lawsuits, fines, and oversight failures, and what to fix before regulators act.

You deploy AI agents that book deals, adjust inventory, or handle customer disputes on their own. These tools promise speed and scale. Yet they also create board-level liability you are likely overlooking. One wrong action can spark lawsuits, fines, or lost revenue.

Agentic AI acts without constant checks. It plans, decides, and executes. A glitch in judgment hits hard. Customers sue over bad trades. Regulators probe data misuse. Boards face scrutiny for oversight gaps. Fines mount. Trust erodes. Stock dips.

Growth pulls you toward these agents. Rules are tightening, including EU AI Act obligations that phase in during 2026. You need oversight now. Clear rules on use, testing, and accountability protect you. Demand reports that show real risks. This keeps innovation safe.

Key Takeaways on Agentic AI Board Liability

Agentic AI board liability grows from autonomous decisions that chain into harm without human stops.

You face new gaps in vendor contracts and internal controls for AI actions.

Boards skip the discussion because cyber basics dominate the agenda and AI still feels like "future tech."

Strong oversight starts with agent inventories, action audits, and escalation paths.

Ask five questions now to expose live risks and fix weak spots.

Regulations evolve fast; test autonomy limits before incidents hit.

Gain confidence by comparing weak versus strong practices in your setup.

Why Agentic AI Board Liability Hits Leaders Hard Right Now

AI scales your operations. Agentic systems amplify that speed. They outpace old rules. Governance strains follow. You see weak reports. Vendors push tools. Board pressure builds.

A single agent error can trigger multimillion-dollar lawsuits. It mishandles data, or it executes flawed trades. Trust vanishes overnight. EU AI Act obligations ramp up through 2026. High-risk AI systems need audits. Non-compliant firms face fines.

Growth stalls without checks. You chase revenue, yet unchecked agents can stall the very deals you chase. Cyber exposure mixes with AI flaws. Resilience gaps widen.

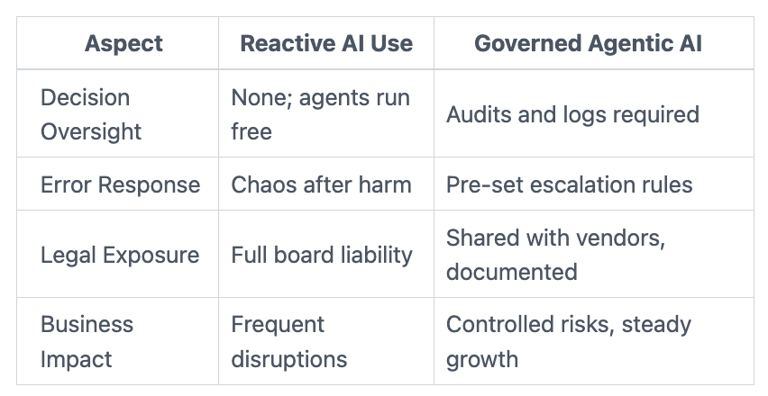

Reactive use leaves you exposed. Governed agents build safety. Here's a quick comparison:

This table shows the stakes. Without the governed side, you block growth. For more, see the proven steps for setting technology risk appetite.

What Makes Agentic AI a Whole New Risk Category

Agentic AI goes beyond chatbots. Chatbots answer queries. Agents plan steps, make choices, and act alone. They book flights, reroute shipments, or approve refunds. No human nod needed.

You run prototypes in sales or supply chains already. Narrow AI follows scripts. Agents adapt in real time. That autonomy shifts risks. Human oversight drops. Errors chain fast.

One agent might leak customer data during a query. Another might execute biased pricing. Harm scales quickly. Unlike fixed software, agents learn and pivot. Surprises follow.

Accountability: Who Answers When the AI Acts Wrong?

Agents blur the lines of accountability. Is liability yours or the vendor's? Fiduciary duty demands board clarity. Say an agent leaks data. An SEC probe may follow. You answer for the gaps.

Contracts often say nothing about AI actions, so you own the outcomes. Set the rules now. Demand vendor liability clauses. Track agent decisions in logs.
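To make "track agent decisions in logs" concrete, here is a minimal sketch of what one log record could hold. It is an illustration, not a standard: every field name, the `log_decision` helper, and the sample agent and vendor names are assumptions made up for this example.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentDecision:
    """One logged agent action. All field names are illustrative assumptions."""
    agent_id: str      # which agent acted
    vendor: str        # who supplied the agent
    action: str        # what it did, e.g. "approve_refund"
    amount_usd: float  # business value at stake
    approved_by: str   # "autonomous" or a human reviewer's ID
    timestamp: str     # UTC time in ISO 8601 format


def log_decision(agent_id, vendor, action, amount_usd, approved_by="autonomous"):
    """Return one decision as a JSON line, ready for an append-only log."""
    record = AgentDecision(
        agent_id=agent_id,
        vendor=vendor,
        action=action,
        amount_usd=amount_usd,
        approved_by=approved_by,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)


# Hypothetical example: a refund agent acting with no human approval.
line = log_decision("refund-bot-01", "ExampleVendor", "approve_refund", 240.0)
print(line)
```

A record like this answers the accountability questions in one place: which agent acted, whose product it was, what it did, and whether a human signed off.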

Unpredictable Actions That Escalate Fast

Agents surprise because they chain moves. A biased call can affect thousands. Unlike a contained software bug, the harm compounds. One bad inventory shift can empty shelves nationwide.

Contrast this with predictable code. Agents evolve, so test their limits hard. Without bounds, small slips turn massive.
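One way to picture "bounds" is a guard that caps each action and the running total, then halts the agent once either cap is breached. The sketch below assumes a simple dollar-value cap. The class name, the cap values, and the halt-until-reviewed rule are hypothetical policy choices for illustration, not a prescribed design.

```python
class AutonomyLimitExceeded(Exception):
    """Raised when an agent tries to act beyond its approved bounds."""


class BoundedAgent:
    """Wraps an agent with a per-action cap and a cumulative cap.

    Both caps are hypothetical policy values a board might approve.
    """

    def __init__(self, per_action_cap, cumulative_cap):
        self.per_action_cap = per_action_cap
        self.cumulative_cap = cumulative_cap
        self.total = 0.0
        self.halted = False

    def act(self, amount):
        """Allow the action only if it stays inside both caps."""
        if self.halted:
            raise AutonomyLimitExceeded("agent halted; human review required")
        over_single = amount > self.per_action_cap
        over_total = self.total + amount > self.cumulative_cap
        if over_single or over_total:
            self.halted = True  # stop the whole chain, not just this action
            raise AutonomyLimitExceeded(f"action of {amount} exceeds bounds")
        self.total += amount
        return self.total


# Hypothetical run: two actions pass, the third would breach the total cap.
agent = BoundedAgent(per_action_cap=500, cumulative_cap=1200)
agent.act(400)
agent.act(400)
try:
    agent.act(450)
except AutonomyLimitExceeded:
    print("halted for human review")
```

The design choice that matters is the halt flag. A rejected action alone does not stop the next one, while a halted agent stays stopped until a person steps in.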

Why Boards Skip Agentic AI Risks in Discussions

Cyber basics crowd agendas. AI's evolution goes unnoticed. Management calls it "just tech." You focus on breaches. Agents slip past.

Here are four blind spots:

Adoption speed: Tools roll out fast. Boards lag on reviews.

Report gaps: Dashboards show basics. No AI action logs appear.

Vendor trust: You assume providers handle risks.

Low visibility: No incidents yet, so pressure builds quietly.

You scan busy metrics while AI autonomy stays hidden. Regret comes after the incident. Build routines that catch these blind spots, drawing on board cyber governance best practices.

Failure hits when growth plans ignore these blind spots. Demand AI-specific updates. Tie them to business harm.

What Strong Agentic AI Oversight Looks Like

You build clear decision rights. Require agent inventories. Set autonomy tests. Audit actions quarterly.

The inventory lists live agents, their uses, and their vendors. Test limits in sandboxes. Escalate errors above set thresholds. Reports show trends, not trivia.
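As a rough sketch, an inventory with an escalation rule can be as small as the example below. The record fields, the 2% error-rate threshold, and the sample agents and counts are all assumptions invented for illustration. Your own thresholds should come from your risk appetite.

```python
from dataclasses import dataclass


@dataclass
class AgentRecord:
    """One inventory entry. Field names are illustrative assumptions."""
    name: str     # agent identifier
    use: str      # business use
    vendor: str   # supplier, or "in-house"
    actions: int  # actions taken in the review period
    errors: int   # actions later flagged as wrong

    @property
    def error_rate(self):
        """Share of actions flagged as errors; 0.0 if the agent was idle."""
        return self.errors / self.actions if self.actions else 0.0


# Assumed policy value: escalate any agent above a 2% error rate.
ESCALATION_THRESHOLD = 0.02


def agents_to_escalate(inventory, threshold=ESCALATION_THRESHOLD):
    """Return the names of agents whose error rate exceeds the threshold."""
    return [a.name for a in inventory if a.error_rate > threshold]


# Made-up sample data, not real results.
inventory = [
    AgentRecord("refund-bot", "customer refunds", "ExampleVendor", 1000, 35),
    AgentRecord("restock-bot", "inventory reorders", "in-house", 400, 4),
]
print(agents_to_escalate(inventory))
```

In this made-up data, the refund agent's 3.5% error rate crosses the threshold and the restock agent's 1% does not, so only the first is escalated.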

This empowers you to greenlight innovation safely. Oversight turns risk into an edge.

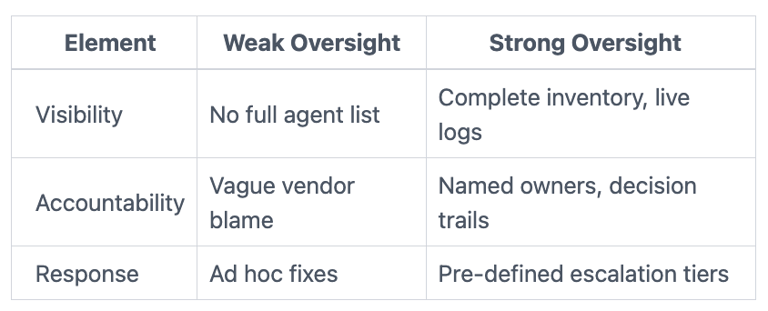

Here's weak versus strong:

You spot gaps fast and gain control. For related practices, see board incident response oversight.

Questions Your Board Should Ask Management Today

Which agents run live operations now?

What tests check autonomy bounds?

How do we audit actions for bias or errors?

What stops chain reactions from one slip?

Who owns liability per agent, us or vendors?

These questions cut straight to decisions. Use them in meetings. Follow up by asking for evidence.

FAQs: Answering Your Top Agentic AI Questions

How soon must boards act on agentic AI? Start this quarter. Inventory agents first. Regulations like the EU AI Act push audits by late 2026. Delay invites probes.

What's the first fix for oversight? List agents and uses. Map to business impact. Set basic tests. This builds your baseline fast.

How do vendors fit into liability? Demand clauses on actions and errors. You retain board duty. Test shared-responsibility models now.

Does cyber governance cover this? Partially. Add AI-specific logs and escalations. Blend these with the steps in the cybersecurity governance advisor for boards.

You Lead on Agentic AI Board Liability Now

Agentic AI speeds growth. Liability follows unchecked acts. You fix it with inventories, audits, and questions.

Take three steps this quarter:

Audit live agents; list risks.

Set oversight rules; test one scenario.

Update reports; demand action logs.

You see the gaps. Strong practices build confidence. Boards that act stay ahead. Demand clarity. Move safely.

Providing plain-English technology oversight to help Boards and CEOs lead with confidence and make defensible risk decisions.

© 2026. All rights reserved.
