Audit Committee Cybersecurity Questions for Metrics, KRIs, and Reporting

Use audit committee cybersecurity questions to sharpen cyber metrics, KRIs, and reporting, so you can spot risk shifts and press for better decisions.

You may get a full cyber packet every quarter and still leave the meeting unsure what changed. That is the core problem. Many reports show activity, yet they don't help you judge whether risk is rising, controls are improving, or management is escalating the right issues.

If you're an audit committee chair, director, or executive, you don't need more charts. You need reporting that supports oversight and leads to decisions. A good starting point is a stronger cybersecurity governance framework for boards, because better questions usually come before better reporting.

The most useful audit committee cybersecurity questions are simple, direct, and tied to business impact. Start there, then press for metrics, KRIs, and reporting that earn their place.

Start with the purpose of cyber reporting before you ask for more metrics

Before you ask for another dashboard, decide what the report is meant to do. Cyber reporting should support oversight, show change over time, highlight decisions, and surface issues that need escalation. If it does not do those four things, it is not helping you govern.

Too many committees ask for more data when the real problem is weak framing. That creates thicker decks and thinner judgment. You do not need a long wish list of charts. You need a short set of reports that help you see movement, ownership, and choices.

If a metric doesn't support a decision, it's probably noise.

Good reporting also creates continuity. It lets you compare this quarter with last quarter, and this year with last year. As a result, you can tell whether management is reducing exposure or only describing work.

What decision should each metric help you make?

Ask management a plain question: what decision is this metric supposed to support? That one question changes the meeting.

A metric may support a funding decision. It may justify a priority change. It may show why an exception should expire, not roll forward. It may trigger escalation. However, if no one can link the metric to a real oversight choice, you are looking at trivia.

This test is hard in the right way. It forces management to separate useful signals from habit. It also keeps the committee from drowning in operational detail that belongs with management, not directors.

What changed since the last meeting, and why does it matter now?

A static number rarely helps. Trend does. Movement does. Context does.

So ask what improved, what slipped, and what has stayed stuck across reporting cycles. Then ask why that movement matters now. Does it affect financial reporting, operations, legal exposure, customer trust, or business continuity? If not, the issue may not belong at the committee level.

This is where many reports fall short. They show current status, but not direction. They show completion, but not impact. Your job is to pull the discussion back to business effect, because activity alone does not reduce risk.
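One way to make "what improved, what slipped, and what stayed stuck" concrete is to label each metric by its movement between two reporting cycles. The sketch below is illustrative only: the metric names, values, and the `tolerance` parameter are assumptions, not figures from any real report.

```python
def classify_movement(previous, current, lower_is_better=True, tolerance=0.0):
    """Label a metric's change between two reporting cycles.

    `tolerance` is a hypothetical band inside which a change is treated
    as no real movement.
    """
    delta = current - previous
    if abs(delta) <= tolerance:
        return "stuck"
    improved = delta < 0 if lower_is_better else delta > 0
    return "improved" if improved else "slipped"


# Hypothetical metrics: name -> (last quarter, this quarter, lower_is_better)
metrics = {
    "overdue critical fixes": (12, 7, True),
    "open policy exceptions": (9, 9, True),
    "systems with tested recovery plans (%)": (80, 72, False),
}

movement = {
    name: classify_movement(prev, curr, lower_is_better=lower)
    for name, (prev, curr, lower) in metrics.items()
}

for name, label in movement.items():
    print(f"{name}: {label}")
```

A label like this only shows direction. The committee still has to ask why the movement matters to the business now.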

Ask for KRIs that show risk movement early, not after the damage is done

A key risk indicator, or KRI, is an early warning sign. It tells you risk may be moving the wrong way before the loss shows up. That is different from an operational metric, which usually tells you what the team did.

For example, patch counts can show effort. They do not always show exposure. On the other hand, the number of critical vulnerabilities past due on key revenue systems can warn you that risk is building. One shows work. The other shows a rising chance of harm.

That distinction matters for audit committees. You should not settle for technical activity counts when the real goal is earlier visibility into material business exposure.
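The difference between an activity count and a KRI can be shown with a small calculation. This is a minimal sketch under stated assumptions: the `Vulnerability` record, the system names, and the dates are all hypothetical, and a real program would pull this data from its own vulnerability and asset tooling.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Vulnerability:
    system: str
    severity: str   # e.g. "critical", "high"
    due: date       # remediation deadline under policy
    fixed: bool


def past_due_critical_on_key_systems(vulns, key_systems, as_of):
    """Count open critical vulnerabilities past due on key systems."""
    return sum(
        1
        for v in vulns
        if not v.fixed
        and v.severity == "critical"
        and v.system in key_systems
        and v.due < as_of
    )


# Hypothetical sample data
vulns = [
    Vulnerability("billing", "critical", date(2024, 1, 15), False),
    Vulnerability("billing", "critical", date(2024, 3, 1), False),
    Vulnerability("intranet-wiki", "critical", date(2024, 1, 10), False),
    Vulnerability("order-api", "high", date(2024, 1, 5), False),
    Vulnerability("order-api", "critical", date(2024, 1, 20), True),
]
key_systems = {"billing", "order-api"}  # assumed revenue-critical systems
as_of = date(2024, 2, 1)

patches_applied = sum(1 for v in vulns if v.fixed)  # shows work done
kri = past_due_critical_on_key_systems(vulns, key_systems, as_of)  # shows exposure

print(f"patches applied: {patches_applied}, past-due critical on key systems: {kri}")
```

The first number describes effort. The second is the one a committee can tie to a rising chance of harm on systems that matter.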

Are your KRIs tied to business risk, or just security team workload?

Weak KRIs often measure workload with little context. Raw ticket volume is a common example. So are total alerts, total scans, or total patches applied. Those numbers may matter to the team, but they do not tell you enough about enterprise risk.

Stronger KRIs connect exposure to business effect. You may need board thresholds for cyber incidents when current reporting stays technical and never reaches oversight language.

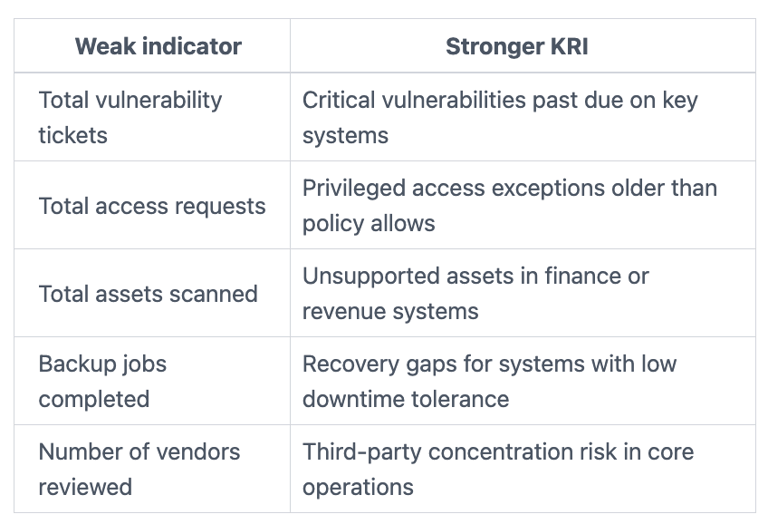

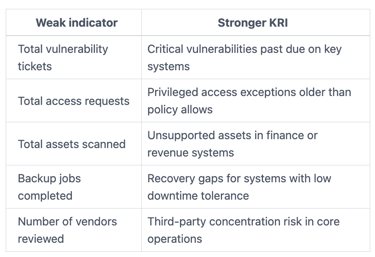

This quick comparison helps:

The takeaway is simple: your KRIs should point to material exposure, not team busyness.

What thresholds trigger escalation to management, the audit committee, or the full board?

A KRI without a threshold is like a gauge with no red line. You may see the reading, but no one knows when to act.

So ask who set the threshold, when it was last updated, and what happens when it is breached. Ask who owns the response. Then ask a harder question: if the same threshold keeps getting breached, is the problem poor execution or poor control design?

Clear thresholds protect both management and the committee. They reduce debate in the moment. They also make escalation more defensible, because the trigger is agreed in advance, not invented under pressure.
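Agreeing thresholds in advance can be as simple as recording, for each KRI, an owner, a review date, and the values that trigger each escalation tier. The sketch below assumes a KRI where higher values are worse. The tier values, owner, and the three-cycle rule for repeated breaches are hypothetical choices, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    owner: str           # who owns the response
    last_reviewed: str   # when the threshold was last updated
    management: float    # value that triggers escalation to management
    committee: float     # value that triggers escalation to the audit committee
    board: float         # value that triggers escalation to the full board


def escalation_level(value, t):
    """Return the highest tier whose trigger the value has reached."""
    if value >= t.board:
        return "full board"
    if value >= t.committee:
        return "audit committee"
    if value >= t.management:
        return "management"
    return "none"


def repeated_breach(history, t, cycles=3):
    """True if the last `cycles` readings all reached the management trigger."""
    recent = history[-cycles:]
    return len(recent) == cycles and all(v >= t.management for v in recent)


# Hypothetical KRI: past-due critical vulnerabilities on key systems
threshold = Threshold(owner="CISO", last_reviewed="2024-Q1",
                      management=5, committee=10, board=20)
history = [3, 6, 7, 12]  # one reading per reporting cycle

level = escalation_level(history[-1], threshold)
persistent = repeated_breach(history, threshold)
print(f"escalate to: {level}; repeated breach: {persistent}")
```

A repeated breach does not say whether execution or control design is at fault. It only flags that the harder question needs to be asked.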

Use audit committee cybersecurity questions that test report quality, not just report content

A report can look polished and still mislead you. That is why your audit committee cybersecurity questions should test the quality of the reporting itself, not only the data inside it.

Bad reporting creates false comfort. It can hide blind spots, blur scope, and overstate progress. In other words, a strong-looking deck can still weaken oversight if the data is incomplete, stale, or one-sided.

Credibility matters here. Your committee should know what it can rely on, what is estimated, and what sits outside view.

Can management explain the source, scope, and limits of the data?

Ask where the data comes from. Ask how often it refreshes. Ask what parts of the environment are excluded.

Those questions often expose the real gap. Mergers, shadow IT, newly acquired business units, and vendor-managed systems can all create blind spots. So can manual data pulls and inconsistent definitions.

If management cannot explain the source and limits of the numbers, you should treat the report as directional, not complete. That does not make it useless. It does mean you need sharper caveats and follow-up. For a broader view on credible board reporting, these CISO insights for executives and boards can help frame what good looks like.
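Source, scope, and limits can be summarized in a short caveat attached to each report. The following is a rough sketch that assumes management keeps an inventory of business units and knows which of them feed the report. The unit names, dates, and the 30-day staleness limit are hypothetical.

```python
from datetime import date


def coverage_summary(inventory, reporting, last_refresh, as_of, max_age_days=30):
    """Summarize how much of the environment a report covers and how fresh it is."""
    excluded = sorted(set(inventory) - set(reporting))
    covered = len(set(inventory)) - len(excluded)
    coverage = covered / len(set(inventory)) if inventory else 0.0
    stale = (as_of - last_refresh).days > max_age_days
    return {"coverage": coverage, "excluded": excluded, "stale": stale}


# Hypothetical environment
inventory = ["hq", "emea", "acquired-unit", "vendor-managed"]
reporting = ["hq", "emea"]

summary = coverage_summary(
    inventory,
    reporting,
    last_refresh=date(2024, 1, 1),
    as_of=date(2024, 3, 1),
)
print(summary)
```

A summary like this tells the committee whether to read the numbers as complete or only directional.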

Does the report show exceptions, misses, and open decisions, or only good news?

Balanced reporting builds trust. Sanitized reporting breaks it.

You should expect to see missed deadlines, policy exceptions, overdue fixes, unresolved ownership, and decisions that still need committee input. Those items are not a sign of failure. They are a sign that management is being honest about risk and execution.

On the other hand, if every slide is green, every date is met, and every trend points in the right direction, you should press harder. Real programs have friction. Real governance records it. Real oversight acts on it.

Focus the discussion on the questions every audit committee should ask each quarter

Quarterly discussions work best when the question set is short and stable. You do not need a 40-question script. You need a focused set that tests usefulness, warning value, and accountability.

If your current pack does not support that discussion, you may need board-ready cybersecurity metrics for CEOs or outside help to reconnect cyber reporting to business decisions.

Questions that test whether cyber metrics are useful

Use these prompts to pressure-test the dashboard, not expand it:

Which three metrics best show whether risk is going up or down?

What metric did you remove this quarter, and why?

Which metric looks stable but hides a problem underneath?

What assumptions sit behind these numbers?

Where do you need committee support to improve performance?

These questions force discipline. They ask management to curate, explain, and decide. They also reveal whether the reporting system is learning over time, or simply repeating itself.

Questions that test whether KRIs will give you enough warning

Early warning only works if it arrives before the business impact lands. So ask:

Which KRIs are closest to threshold breach today?

What trend worries management most right now?

If that threshold is crossed, what is the likely business effect?

How fast would you know it happened?

What preventive action is already underway?

This set keeps the committee out of deep technical detail while keeping the focus on timing, consequence, and response. That is where oversight adds value.

Questions that test whether reporting supports accountability

Every serious cyber issue should have an owner, a decision path, and a review date. Ask:

Who owns each top risk, by name and role?

What decision is pending from management or the committee?

Where are we accepting risk by choice, not by accident?

What has not improved after repeated reporting cycles?

What would you want this committee to know sooner next time?

When you ask these questions every quarter, the tone changes. Reporting becomes less about status and more about action. If you need help tightening ownership and cadence at the board level, cybersecurity board advisor support can help strengthen that operating rhythm.

Strong oversight does not come from more pages. It comes from sharper signals, cleaner thresholds, and visible accountability.

You do not need more cyber data. You need better judgment aids. That means fewer vanity metrics, better KRIs, and reporting that shows movement, ownership, and decisions.

Start with purpose. Then test whether the metrics support action, whether the KRIs warn early enough, and whether the reporting is complete enough to trust. If those three things improve, your oversight improves with them.

If you want to strengthen your committee's approach, review these resources for boards and executives and use your next meeting to ask for clearer signals, clearer thresholds, and a clearer escalation path.