Cybersecurity Program Assessment Mistakes That Cost Companies Millions

Stop wasting money on a cybersecurity program assessment that ends as a report. Fix scope, evidence, metrics, owners, and recovery tests in 90 days.

If your cybersecurity program assessment ends with a polished report and no improvement in risk management, you didn't buy clarity. You bought paper. That's a hard truth, especially when the next ransomware event, vendor breach, or data exposure turns into downtime, legal costs, and customer churn.

A good assessment should help you make confident decisions. It should tell you what can realistically go wrong, how bad it could get, and what you can do next. It should also show proof, not opinions, that your controls work when you need them most.

The most expensive mistakes are predictable. They usually come from a bad scope, weak evidence, noisy metrics, unclear ownership, and untested readiness. If you fix those five areas, you stop wasting money and start reducing loss.

Key takeaways and best practices you can use before your next assessment

Tie scope to business goals and your top loss scenarios.

Demand evidence of security controls performance, not policy statements.

Measure outcomes (time to detect, contain, recover), not activity.

Name owners for every finding, with deadlines you can track.

Test incident decisions and recovery, don't assume they'll work.

Rank "crown jewel" systems, then assess those first.

Treat key vendors as part of your risk boundary.

Turn the report into a 90-day execution plan, fast.

Mistake 1: Treating the assessment like a compliance check instead of a business risk review

Compliance has value, but it's not the finish line. When your team treats a cybersecurity program risk assessment like an audit drill, they optimize for passing audits against industry standards. As a result, policies look complete, controls look mapped, and exceptions get politely documented. Meanwhile, real paths to material loss stay open.

Here's the common pattern. You see strong documentation for regulatory compliance, but weak operational reality. You have "MFA required" in policy, yet admins still use shared accounts. You have a backup standard, yet no one has proven restores on the systems that run revenue.

Picture a ransomware event. Attackers land on a single endpoint, then move to identity, then encrypt file shares and virtual servers. On paper, you had training, backups, and an incident plan. In practice, you lose five days of operations, pay for emergency forensics, face contract penalties, and watch customers move to competitors. Insurance renewal gets ugly too, because your evidence wasn't there.

If you want a simple mindset shift, anchor on moving from compliance to confidence, because confidence comes from tested capability, not checked boxes.

If the assessment can't explain your biggest business risks in plain language, it won't change your outcomes.

How to reframe scope around your biggest loss scenarios

To better align governance risk and compliance, start by scoping like you would for safety or financial controls. Keep it practical:

First, anchor your gap analysis by listing your crown jewels (the data sets, systems, and services you can't lose). Next, map your top five business processes that depend on technology (order to cash, payroll, care delivery, shipping, manufacturing, billing). Then define three to five realistic threat scenarios that would cause material pain, such as ransomware, vendor compromise, insider misuse, or cloud misconfiguration.

Now assess controls against those scenarios. Ask, "Would this control stop it, limit it, or speed recovery?" You can align to NIST CSF, ISO 27001, or FISMA requirements without turning the work into a framework debate. The goal is to connect controls to loss prevention.
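As a rough illustration of the "stop it, limit it, or speed recovery" question, here is a minimal Python sketch that maps controls to scenarios and lists which of the three roles has no control behind it. The scenario names, control names, and the `coverage_gaps` helper are hypothetical examples, not a standard taxonomy.

```python
from collections import defaultdict

# Hypothetical scenarios and controls, for illustration only.
SCENARIOS = ["ransomware", "vendor compromise", "cloud misconfiguration"]
ROLES = ("stop", "limit", "recover")

# Each control records the scenarios it addresses and the role it plays there.
CONTROLS = [
    {"name": "MFA on admin accounts",
     "effects": {"ransomware": "stop", "vendor compromise": "limit"}},
    {"name": "Network segmentation", "effects": {"ransomware": "limit"}},
    {"name": "Tested restores", "effects": {"ransomware": "recover"}},
    {"name": "Cloud config baseline scans",
     "effects": {"cloud misconfiguration": "stop"}},
]


def coverage_gaps(scenarios, controls):
    """Return, per scenario, the roles that no control currently fills."""
    covered = defaultdict(set)
    for control in controls:
        for scenario, role in control["effects"].items():
            covered[scenario].add(role)
    return {s: [r for r in ROLES if r not in covered[s]] for s in scenarios}


gaps = coverage_gaps(SCENARIOS, CONTROLS)
for scenario, missing in gaps.items():
    print(f"{scenario}: missing {', '.join(missing) if missing else 'nothing'}")
```

A table like this only says a control is claimed for a role. It does not replace evidence that the control works.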

What boards and CEOs should ask to keep it real

You don't need technical questions, you need decision questions. These prompts keep the conversation grounded:

What internal controls would stop ransomware from halting revenue for five days?

Which two systems, if down, would trigger customer contract penalties?

Where are we relying on a vendor's security without verifying it?

When did we last prove we can restore our top three systems?

What's our fastest path to a material disclosure, and who decides it?

Where are we knowingly accepting risk, and when does that expire?

If you want more board-ready prompts following a risk-based approach, use these audit committee cyber risk oversight questions to pressure test ownership, evidence, and follow-through.

Mistake 2: Measuring activity and tools, not outcomes and exposure

Busy dashboards can create calm in the wrong way. You'll see counts of patched devices, completed trainings, blocked attacks, and open tickets. Those numbers may be real, yet they don't answer the business question you care about: "Is our security program maturing in ways that make a costly event less likely?"

Activity metrics are outputs. They describe motion, such as penetration testing completions. Outcome metrics describe protection and recovery. That difference matters because it drives budget, priorities, and risk acceptance.

For example, "98 percent patch compliance" can hide a fatal gap if crown jewel systems lag for 30 days. "All employees trained" means little if phishing reporting rates are low and privileged access is loose. Even "vulnerability scanning" completion or "threats blocked" can mislead, since high block counts often mean attackers are scanning you, not that you're winning.
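To see how a headline number can hide a crown jewel gap, consider this small Python sketch. The asset counts and the `tier` labels are made up for illustration. The point is only that the same data can read 98 percent overall and far lower for the systems that matter most.

```python
def patch_compliance(assets):
    """Percent of assets marked patched, or None for an empty list."""
    if not assets:
        return None
    return 100 * sum(a["patched"] for a in assets) / len(assets)


# Hypothetical inventory: 96 standard assets (all patched)
# and 4 crown jewel assets (only 2 patched).
assets = (
    [{"tier": "standard", "patched": True} for _ in range(96)]
    + [{"tier": "crown_jewel", "patched": True} for _ in range(2)]
    + [{"tier": "crown_jewel", "patched": False} for _ in range(2)]
)

overall = patch_compliance(assets)
by_tier = {
    tier: patch_compliance([a for a in assets if a["tier"] == tier])
    for tier in sorted({a["tier"] for a in assets})
}

print(f"overall: {overall:.0f}%")
for tier, pct in by_tier.items():
    print(f"{tier}: {pct:.0f}%")
```

Reporting the per-tier figure next to the total is a cheap way to keep the dashboard honest.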

Bad metrics lead to bad funding decisions. You keep paying for more tools because the dashboard looks "busy." Meanwhile, you underfund basics like identity hardening, logging coverage, and recovery testing. Then an incident hits, and you discover you can't detect it quickly, can't contain it cleanly, and can't restore within business tolerance.

To make metrics useful, focus on what executives can act on at your current maturity level. A helpful framing is in The Hidden Value of Cyber Metrics Executives Actually Understand, which pushes metrics toward decisions, not noise.

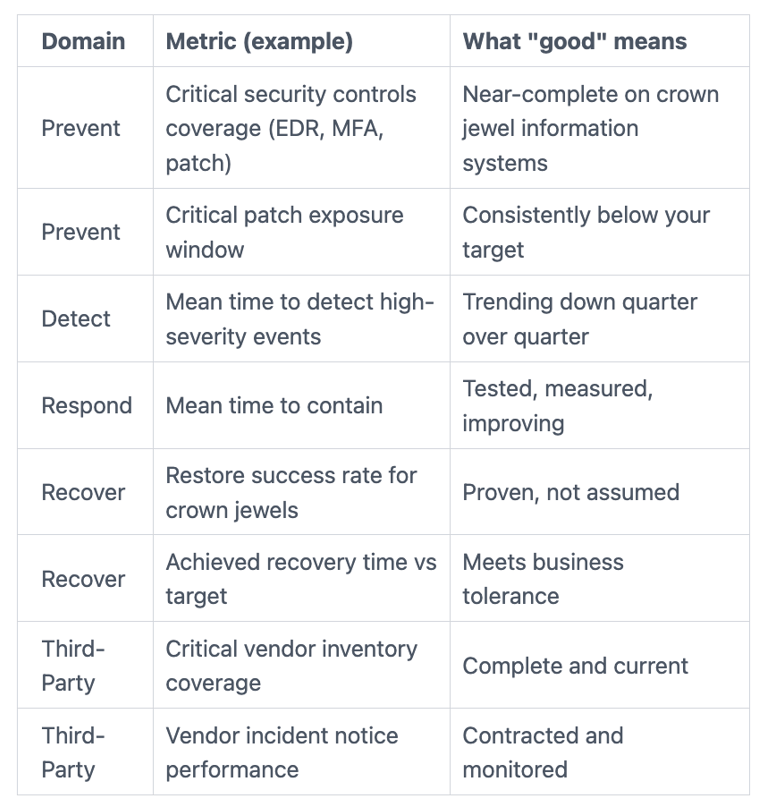

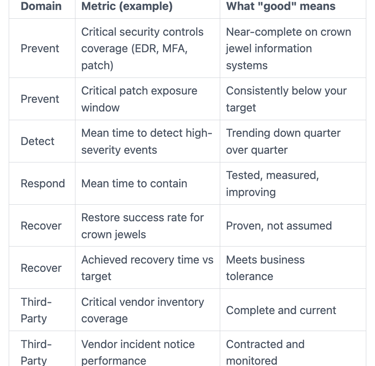

A simple scorecard that shows risk reduction without heavy math

A one-page scorecard works when it stays small, trend-based, and addresses operational risk. Here's a clean starting point you can review monthly.

Start with what you can measure reliably, then improve it over 90 days. The direction matters as much as the number.
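One way to produce outcome numbers for such a scorecard is sketched below in Python. It assumes you keep four timestamps per incident (`started`, `detected`, `contained`, `recovered`). Those field names, the sample incidents, and the prior-period figures are hypothetical, and the median is used here simply because it is less sensitive to one unusually long incident.

```python
from datetime import datetime
from statistics import median


def hours(start, end):
    """Elapsed hours between two datetimes."""
    return (end - start).total_seconds() / 3600


def scorecard(incidents):
    """Median hours to detect, contain, and recover across incidents."""
    return {
        "detect": median(hours(i["started"], i["detected"]) for i in incidents),
        "contain": median(hours(i["detected"], i["contained"]) for i in incidents),
        "recover": median(hours(i["contained"], i["recovered"]) for i in incidents),
    }


def trend(current, previous):
    """Label each metric by direction; lower is better for all three."""
    labels = {}
    for name, value in current.items():
        if value < previous[name]:
            labels[name] = "improving"
        elif value > previous[name]:
            labels[name] = "worsening"
        else:
            labels[name] = "flat"
    return labels


# Hypothetical incident log for the current period.
incidents = [
    {"started": datetime(2024, 1, 3, 8), "detected": datetime(2024, 1, 3, 14),
     "contained": datetime(2024, 1, 3, 20), "recovered": datetime(2024, 1, 4, 20)},
    {"started": datetime(2024, 2, 10, 9), "detected": datetime(2024, 2, 10, 11),
     "contained": datetime(2024, 2, 10, 15), "recovered": datetime(2024, 2, 11, 3)},
    {"started": datetime(2024, 3, 5, 10), "detected": datetime(2024, 3, 5, 14),
     "contained": datetime(2024, 3, 5, 22), "recovered": datetime(2024, 3, 6, 16)},
]
previous = {"detect": 9, "contain": 6, "recover": 30}  # hypothetical prior period

current = scorecard(incidents)
direction = trend(current, previous)
for name in current:
    print(f"time to {name}: {current[name]:.1f}h ({direction[name]})")
```

If you have too few real incidents to measure, drill and tabletop timings can feed the same structure, as long as you label them as exercises.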

Red flags that your metrics are telling the wrong story

You can't see recovery time for crown jewel systems.

You don't track coverage by criticality, only totals.

You report "training completion," but not reporting behavior.

You can't show internal control failures and repeat failures.

You have no trend lines, only point-in-time snapshots.

You can't tie metrics to top risk scenarios.

You measure alerts, but not time to contain.

You have no metrics drawn from past security assessments.

Mistake 3: Producing a report with no owners, no deadlines, and no proof of readiness

A cybersecurity program assessment fails most often after the final meeting. The report lands, everyone agrees it's "important," and nothing changes. Findings from the gap analysis get parked in backlogs, or spread across teams with unclear decision rights. Six months later, the same gaps appear again, usually right before an audit, a renewal, or a deal.

This is where costs compound. Repeated findings waste internal time. Unclear ownership delays fixes that reduce exposure. When an incident happens, you pay more because containment is slower and recovery is messy. Even without an incident, deals can stall when buyers ask for proof you can't produce. Cyber insurance can also get more expensive when you can't show tested security controls.

Most of this comes down to governance: who can accept risk, who must fix it, and how you verify closure. Boards can't run the work, but they can require a cadence that kills assumptions. If you want a strong model for that, read board oversight of incident response readiness, because readiness is where plans often collapse.

Turn findings into a 90-day plan leaders can inspect

You don't need a complex program plan to start. You need a visible plan.

A strong 90-day remediation roadmap takes a risk-based approach and includes: the top 10 risks, an accountable owner (role, not a committee), a due date, a cost range, key dependencies, and the proof you'll use to confirm closure of technical findings (test results, screenshots, logs, access review evidence).
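The roadmap fields above fit in a very small data structure. The Python sketch below is one possible shape, not a prescribed tool. The `Finding` class, the `needs_attention` check, and every sample entry are hypothetical. It flags two things leaders typically want to see: items past their due date, and items marked closed with no proof attached.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Finding:
    risk: str
    owner: str                 # a named role, not a committee
    due: date
    cost_range: str
    dependencies: list = field(default_factory=list)
    proof: str = ""            # evidence used to confirm closure
    closed: bool = False


def needs_attention(findings, today):
    """Return (risk, reason) pairs for overdue items and unproven closures."""
    flagged = []
    for f in findings:
        if f.closed and not f.proof:
            flagged.append((f.risk, "closed without proof"))
        elif not f.closed and f.due < today:
            flagged.append((f.risk, "overdue"))
    return flagged


# Hypothetical roadmap entries.
roadmap = [
    Finding("Shared admin accounts", "Director of IT Operations",
            date(2025, 2, 15), "medium"),
    Finding("Untested restores for billing", "Head of Infrastructure",
            date(2025, 3, 20), "low", dependencies=["backup vendor support"]),
    Finding("No access reviews for finance system", "Controller",
            date(2025, 2, 1), "low", closed=True),
    Finding("Logging gaps on identity systems", "Security Operations Lead",
            date(2025, 2, 20), "medium", proof="log coverage report", closed=True),
]

flagged = needs_attention(roadmap, today=date(2025, 3, 1))
for risk, reason in flagged:
    print(f"{risk}: {reason}")
```

A spreadsheet with the same columns works just as well. What matters is that "closed" is never accepted without the proof field filled in.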

Then set a simple rhythm. Hold a weekly working session to unblock delivery. Run a monthly executive review to make decisions, approve spend, and accept risk explicitly. If a fix slips, you should see why within two weeks, not two quarters.

How to validate readiness with tabletop exercises and recovery tests

Two tests matter more than most companies admit.

First, run an incident tabletop that forces executive decisions under pressure. Practice who declares the incident, who can shut systems down, who speaks externally, and how legal and comms stay aligned. You're building muscle memory, not theater.

Second, run a recovery test that restores critical systems and data. Don't test the backup tool, test the restore outcome. Time it, document it, and repeat it until results match your business tolerance.
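A timed restore only becomes useful when you compare it with the tolerance the business has agreed to. The following Python sketch shows that comparison under assumed inputs. The system names, tolerances, and measured durations are hypothetical, and a system with no measurement is reported as untested rather than assumed to pass.

```python
from datetime import timedelta


def evaluate_restores(tolerance, measured):
    """Compare measured restore times to tolerance: pass, fail, or untested."""
    results = {}
    for system, limit in tolerance.items():
        actual = measured.get(system)
        if actual is None:
            results[system] = "untested"
        elif actual <= limit:
            results[system] = "pass"
        else:
            results[system] = "fail"
    return results


# Hypothetical business tolerances and restore test timings.
tolerance = {
    "billing": timedelta(hours=8),
    "order portal": timedelta(hours=4),
    "payroll": timedelta(hours=24),
}
measured = {
    "billing": timedelta(hours=5, minutes=30),
    "order portal": timedelta(hours=12),
    # payroll has not been restore-tested yet
}

restore_status = evaluate_restores(tolerance, measured)
for system, status in restore_status.items():
    print(f"{system}: {status}")
```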

If ransomware is your highest-impact scenario, a board-level view like this board ransomware readiness briefing helps you pre-make the decisions that otherwise burn hours during a crisis.

How to avoid these mistakes without slowing the business down

You don't need a bigger assessment. You need a sharper one.

Start by setting purpose. Pick one business decision the assessment must support, such as budget approval, insurance renewal, audit readiness for FISMA, an acquisition, or a major system change. Then scope to crown jewels and top loss scenarios. After that, require evidence. "We have a process" isn't evidence, but restore logs and access review results are.

Next, focus on a small set of outcomes. Limit reporting to what changes decisions, such as detection speed, containment speed, recovery confidence, and critical asset coverage. Then assign owners and deadlines, and schedule verification so you can prove closure.

Sometimes your challenge isn't intent, it's capacity or credibility. If you're in transition, short-staffed, post-incident, or facing board pressure, outside leadership can stabilize execution without adding friction. Options like a fractional CISO focused on governance risk and compliance can help you translate findings into a plan leaders can track.

When an independent advisor changes the quality of decisions

Independence helps when internal teams are too close to the work, or when trust is strained. You'll feel that most after an incident, during rapid growth, in M&A, under new regulatory pressure, or when you're hiring your first CISO and need a clear bar.

An advisor can also reduce politics. Instead of arguing tool preferences, you can align on technology-related risks, evidence, and sequencing. Most importantly, you get a neutral voice to translate technical findings into business choices you can defend.

If you want a structured way to bring that support in, you can engage a CISO advisor for targeted assessment scoping, roadmap development, executive reporting on your maturity level, and readiness validation.

FAQs about cybersecurity assessments

How often should you run a cybersecurity assessment?

At least annually, and also after major change (acquisitions, cloud moves, new platforms, or incidents).

What evidence matters most in an assessment?

Proof that controls work, like restore tests, access reviews, vulnerability scan and penetration test results, logging coverage reports, and incident drill results.

How long does a typical assessment take?

Most take 4 to 8 weeks, depending on scope, evidence quality, and how many teams you involve.

What if two security assessments conflict on risk?

Use your crown jewels and loss scenarios as the tie-breaker, then ask for the evidence behind each claim.

How do you prioritize fixes without boiling the ocean?

Start with the top five risks from your risk assessment tied to material loss, then fund the smallest set of changes that reduce them.

How should boards track progress after the assessment?

Require a top-10 risk list with owners, dates, and proof of closure, then review trends quarterly.

Conclusion

A cybersecurity assessment should reduce uncertainty, not create another binder. The biggest money-burning mistakes are simple: treating the work like compliance, measuring activity instead of outcomes, and producing findings with no owners, deadlines, or proof of readiness.

You can fix this by scoping to your biggest loss scenarios, demanding evidence that controls work, and turning results into a 90-day plan with verification. That approach protects uptime, lowers breach impact, and makes spending more defensible.

Your next step is to pick one upcoming decision, such as budget, insurance renewal, audit planning, an acquisition, or a major system change, then use the assessment to reduce risk for that decision. When you do that, the assessment strengthens your security posture and becomes a business tool, not a report.