AI Governance for Boards: The Questions Management Must Answer Now

AI governance for boards starts with better questions. See what you should ask management now about ownership, risk, controls, and escalation.

AI governance for boards does not mean you manage models, tools, or prompts. It means you have enough visibility to judge whether management is using AI in a way that is safe, legal, aligned to strategy, and owned by someone who can answer for the outcome.

That matters now because adoption is moving faster than oversight. In many companies, AI is already showing up in vendor tools, employee workflows, customer service, analytics, and decision support, often before the board sees a clear picture. As a result, risk can spread quietly across legal exposure, customer trust, cybersecurity, operations, and brand.

You do not need to become technical. You do need better questions, better reporting, and clear lines of accountability before a problem forces the issue.

Key takeaways boards should keep in view before the next AI discussion

Your role is oversight, not tool selection or model management.

Start with visibility, because you cannot govern what management cannot inventory.

Push for named owners, decision rights, and escalation triggers.

Ask for controls around data use, vendors, testing, and human review.

Require reporting that shows changes, exceptions, and decisions needed.

Treat AI like any other material business risk, with boundaries and accountability.

Why AI governance has become a board issue so quickly

AI is no longer a side topic for innovation teams. It now touches pricing, hiring, customer support, fraud control, content, forecasting, product features, and internal operations. Because of that, it also touches trust, legal exposure, cyber risk, and business continuity.

In many firms, adoption is scattered. One team buys a vendor tool with embedded AI. Another team experiments with public tools. Meanwhile, a product group builds a customer-facing use case. By the time leadership asks for a full picture, the company may already depend on systems it has not classified well.

That is why board oversight matters. You are not trying to slow down useful work. You are trying to make sure speed does not outrun judgment, ownership, or evidence.

The real risk is not just bad models, it is weak oversight

The biggest failure is often not the model itself. It is the absence of clear ownership, plain reporting, and a known path for escalation when something goes wrong.

If management cannot explain where AI is used, who approved it, what data it touches, and when the board gets informed, the exposure is already larger than it looks. In other words, weak governance multiplies every other AI risk.

The same discipline underpins strong board-level reporting tied to business impact, where updates lead to decisions rather than reassurance.

Fast adoption creates hidden exposure across the business

Hidden exposure often starts with convenience. Employees paste sensitive data into public tools. Vendors add AI features without clear contract language. Teams rely on generated output without testing accuracy, bias, or error rates. Later, those shortcuts turn into customer harm, poor records, or decisions nobody can defend.

You also face a documentation problem. If use cases are not inventoried and risk-tiered, management cannot show which uses are low-risk assistants and which ones shape real outcomes.

The right questions boards should ask management now

The best board questions do not sound like a compliance audit. They sound like leadership pressing for clarity.

What are we using AI for, and where does it matter most?

Start with visibility. Ask management, "Where are we using AI today, where is it being tested, and which uses touch customers, regulated data, or important decisions?"

A strong answer includes a current inventory, named owners, risk tiers, and a short list of high-impact use cases. A weak answer sounds like, "We are experimenting in a few places."

You also want to know what would hurt the business if it failed. A chatbot typo is one thing. An AI-supported pricing decision, hiring screen, fraud flag, or customer denial is another.

Who owns AI risk, and how do decisions get made?

Next, press on accountability. Ask, "Who approves new AI use cases, who owns the risk after launch, and what gets escalated to the executive team or board?"

A strong answer names people, not functions. It explains decision rights, review paths, and when legal, security, product, risk, and operations come in. A weak answer spreads ownership across everyone.

If ownership is split everywhere, it is owned nowhere.

Boards already know how to set boundaries in other risk areas. The same logic applies to AI: agree on a technology risk appetite and clear escalation thresholds.

How are we managing data, vendors, and control gaps?

Then move to controls. Ask, "What data goes into these tools, which vendors are involved, what protections do contracts give us, and where do we still depend on human review?"

A strong answer covers data sources, sensitive information rules, vendor terms, testing, monitoring, and the controls used when outputs shape real decisions. A weak answer leans on trust in the vendor or broad policy language with no proof of use.

An AI policy without working controls is like a fire alarm with no one assigned to respond.

What would trigger board attention or intervention?

Finally, ask what would make management escalate fast. The answer should cover customer harm, legal concern, high-risk use cases, major model drift, data leakage, material vendor issues, and repeated control failures.

A strong answer gives you thresholds, update timing, and who calls whom. A weak one says, "We will bring it up if it is serious." That is too late. The board should expect the same clarity it would want in board incident response oversight, where escalation starts before confusion turns into delay.

What good AI governance looks like in practice

Good governance is usable. It is not a long policy few people read. It is a working system that gives you visibility, ownership, and a steady reporting rhythm.

Clear inventory, clear ownership, clear reporting

A busy board should be able to spot the basics fast. You want a current inventory of AI use cases, a named owner for each meaningful use, a simple risk tier, and regular updates that highlight changes, exceptions, and decisions needed.

This is the same pattern behind strong board cyber governance practices: clear thresholds, clear owners, and reporting that supports judgment.

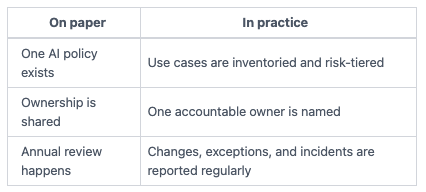

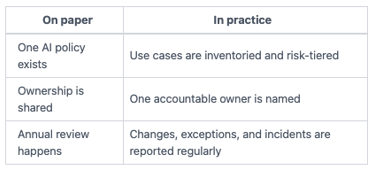

Here is the difference between paper governance and working oversight:

The takeaway is simple. If you cannot see what changed and who owns it, you do not have governance yet.

A simple framework beats a long policy nobody uses

Most companies do not need a heavy program to start. They need a practical operating model. That means approval paths, review criteria, testing rules, basic documentation, incident triggers, and a board reporting cadence.

Keep it short. Keep it clear. Keep it tied to real business use.

How boards can move from curiosity to real oversight this quarter

You do not need a six-month project to get started. You need a short management briefing and a few firm expectations.

Ask for a simple AI risk snapshot before the next meeting

Request a brief update that covers active use cases, planned uses, high-risk areas, key vendors, sensitive data exposure, current policies, known gaps, recent issues, and decisions needed from leadership or the board.

If management cannot produce that in a concise form, that is useful information. It usually means adoption has moved ahead of governance.

Set expectations for reporting before problems force the issue

After that, define what you want to see each quarter. Ask for trend reporting, exception reporting, approvals for high-risk uses, and clear escalation triggers.

Consistency matters more than volume. You want a reporting pattern that helps you compare movement over time, not a different story every meeting.

Common questions boards ask about AI governance

Does every company need a formal AI governance program now?

Not every company needs a large program. However, if your company uses AI in meaningful ways, you do need clear ownership, basic controls, and board visibility that matches the risk.

Should the board approve each AI use case?

Usually, no. Your job is to oversee the framework, the risk boundaries, and the escalation rules. Management should handle routine use cases. The board should get involved when a use case is material, high-risk, or outside agreed limits.

What is the biggest red flag in management's AI update?

Vague answers. If there is no inventory, no named owner, no vendor view, and no clear threshold for escalation, governance is lagging behind adoption.

Strong AI governance for boards starts with three things: visibility, ownership, and better questions. Once you have those, reporting improves, decisions get cleaner, and problems become harder to hide.

Use your next board or executive meeting to pressure-test management's readiness. Ask where AI is in use, who owns the risk, what controls are real, and what would trigger immediate escalation.

If the answers are crisp, you are on your way. If the answers are fuzzy, that is your signal. The work is not to debate AI in the abstract. The work is to make its use more defensible, before speed turns into avoidable exposure.