AI Governance for Boards Without Slowing the Business

Use AI governance for boards to set clear guardrails, speed decisions, assign ownership, and keep oversight strong without slowing the business.

Good AI governance for boards should make the business faster, not slower. The point is not to add another approval maze. The point is to set clear decision rights, risk boundaries, reporting, and escalation so teams can move with fewer surprises.

That matters because AI gains are real, and so are the failure modes. You want better speed, lower cost, and smarter decisions. At the same time, you need oversight, accountability, and trust. If the board waits too long, AI spreads without ownership. If the board overreacts, the company builds a brake pedal instead of a governance model.

The right answer is simple. Keep the board focused on boundaries and visibility, and keep management responsible for execution.

Key takeaways

AI governance works best when you set clear ownership, risk tiers, and escalation triggers early.

The board should govern principles, appetite, and reporting, not approve every AI experiment.

A light governance model helps you move faster because teams know which uses can proceed and which need review.

You need a small set of controls first: an inventory, named owners, vendor checks, testing, and stop-use triggers.

Board reporting should stay short, trend-based, and decision-focused.

Why AI governance becomes a board issue sooner than most teams expect

AI rarely stays in one corner of the company. It moves from a pilot to customer service, marketing, finance, product, HR, and vendor workflows faster than leadership expects. As a result, the risk stops being purely technical and becomes business-wide.

That shift is why AI governance for boards becomes urgent early. AI can affect pricing, hiring, customer experience, brand trust, legal exposure, data handling, and operating quality. It can also deepen vendor dependence. If a key model provider fails, changes terms, or mishandles data, your business still owns the outcome.

Boards do not need to run AI projects. They do need visibility into where AI is used, who owns it, what data it touches, and what happens if something goes wrong. That means knowing which uses are low-risk productivity tools and which ones could affect customers, regulated data, or material decisions.

This is similar to how boards think about technology risk appetite and board risk thresholds. You are not managing the tools. You are setting the guardrails that define what is acceptable, what needs escalation, and what deserves board attention.

The real risk is not just bad models but weak ownership

Most AI failures do not start with a broken model. They start with blurry ownership.

One team buys a tool. Another team feeds it sensitive data. A vendor changes features. Legal hears about it late. Security assumes someone else reviewed it. Then the board gets a clean story until a customer, regulator, or employee raises a harder one.

If no one owns an AI use case, you do not have governance. You have exposure.

At board level, weak ownership shows up as thin reporting, unmanaged vendor risk, inconsistent review, and slow escalation. Those are governance failures, not coding failures.

What boards need to oversee, and what management should own

The line should stay simple. The board approves principles, risk appetite, reporting expectations, and escalation triggers. The board also challenges material uses, concentration risk, and major exceptions.

Management owns the operating work. That includes the AI inventory, control design, training, testing, procurement, and day-to-day decisions. Management should also decide which uses fit within approved guardrails.

When that split is clear, AI governance for boards becomes workable. When it is not, either the board drifts into operations or management runs ahead without discipline.

Start with a light governance model that matches how your business actually works

The biggest mistake is copying a heavy enterprise framework that does not fit your company. That usually creates delay, not control. Teams either work around it or stop bringing ideas forward.

A better approach is a light model that matches your size, AI maturity, and risk profile. If you are a mid-sized company with a handful of internal use cases, you do not need a sprawling council and a 40-page policy stack. You need a few clear rules, a small review group, and fast escalation when risk rises.

This is where board cyber governance best practices apply well to AI too. Good governance is proportionate. It creates clarity first, then adds structure where the business has real exposure.

Set clear rules for which AI decisions need review, and which do not

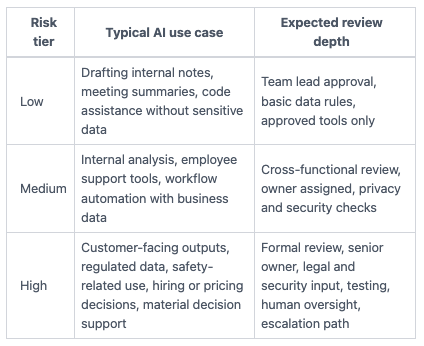

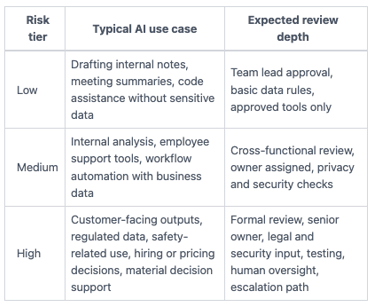

Risk tiering is the fastest way to keep work moving. Low-risk uses can proceed inside preset guardrails. Higher-risk uses should trigger more review before launch.

A simple three-tier model of low, medium, and high risk helps.

The takeaway is not the labels. It is the speed. Teams move faster when they know the lane they are in.
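If it helps to make the lanes concrete, risk tiering can be written down as a few explicit rules. The sketch below is only an illustration: the `UseCase` fields, the `assign_tier` function, and the specific rules that map a use case to a tier are assumptions chosen for this example, not a standard. Your own thresholds should come from your risk appetite.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class UseCase:
    """Hypothetical description of one AI use case."""
    name: str
    customer_facing: bool = False
    uses_confidential_data: bool = False
    touches_regulated_data: bool = False
    affects_material_decisions: bool = False


def assign_tier(use_case: UseCase) -> RiskTier:
    """Map a use case to a tier using illustrative, assumed rules."""
    # Assumed rule: regulated data or material decisions always mean high risk.
    if use_case.touches_regulated_data or use_case.affects_material_decisions:
        return RiskTier.HIGH
    # Assumed rule: customer exposure or confidential data means medium risk.
    if use_case.customer_facing or use_case.uses_confidential_data:
        return RiskTier.MEDIUM
    # Everything else is an internal productivity use inside preset guardrails.
    return RiskTier.LOW


def needs_review_before_launch(use_case: UseCase) -> bool:
    """Low-risk uses proceed; anything higher triggers review first."""
    return assign_tier(use_case) is not RiskTier.LOW


if __name__ == "__main__":
    for case in (
        UseCase("meeting notes summarizer"),
        UseCase("support chatbot", customer_facing=True),
        UseCase("resume screening", affects_material_decisions=True),
    ):
        print(case.name, assign_tier(case).value, needs_review_before_launch(case))
```

The design choice that matters is that the rules are short enough for a team to apply without calling a meeting.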

Pick a small group to coordinate governance without becoming a bottleneck

You do not need a large committee. You need a small working group with clear limits.

In most companies, that means legal, security, technology, data, operations, and one business leader. Meet on a steady cadence. Triage quickly. Record decisions. Escalate only when a use case crosses a defined threshold.

That group should remove confusion, not review every prompt or experiment. Think traffic cop, not tollbooth.

Build the few controls that create confidence and keep work moving

Most companies do not need a giant policy stack first. They need a short list of controls that protect the business while keeping teams productive.

Start with visibility. Then add basic checks. Then tighten where use expands. If you reverse that order, governance becomes paper before it becomes real.

Know where AI is being used, what data it touches, and who is accountable

You cannot govern what you cannot see. So start with a practical AI inventory.

That inventory does not need to be fancy. It should tell you which AI tools or use cases exist, which team owns each one, what data it touches, whether it is customer-facing, which vendors are involved, and what risk tier applies. If a use case has no named owner, it is not approved.
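As a rough sketch, an inventory entry can be as small as one record per use case. The field names and the `is_approvable` check below are hypothetical, chosen only to mirror the items listed above. A spreadsheet with the same columns would do the same job.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InventoryEntry:
    """One row in a hypothetical AI inventory."""
    use_case: str
    owning_team: str
    named_owner: Optional[str]      # a person, not a department
    data_touched: list[str]
    customer_facing: bool
    vendors: list[str] = field(default_factory=list)
    risk_tier: str = "low"          # assumed labels: low, medium, high

    def is_approvable(self) -> bool:
        # No named owner means the use case is not approved.
        return bool(self.named_owner and self.named_owner.strip())


def unowned_use_cases(entries: list[InventoryEntry]) -> list[str]:
    """Return the use cases that cannot be approved yet."""
    return [e.use_case for e in entries if not e.is_approvable()]


if __name__ == "__main__":
    inventory = [
        InventoryEntry("support chatbot", "Customer Service", "J. Rivera",
                       ["customer messages"], True, ["ExampleVendor"], "medium"),
        InventoryEntry("contract summarizer", "Legal", None,
                       ["confidential contracts"], False),
    ]
    print(unowned_use_cases(inventory))
```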

Basic data classification matters here too. Teams need plain rules on what can and cannot go into public or third-party AI tools. Without that, employee use becomes shadow AI, and shadow AI becomes a reporting problem later.

This also improves board visibility. When management can name material use cases and owners, reporting gets sharper. When it cannot, you are relying on assumptions.

Use simple testing, vendor checks, and escalation triggers before problems grow

Testing does not need to be academic. It needs to be useful.

For sensitive use cases, review output quality before launch. Check for bias or fairness when the use case could affect people differently. Review privacy and security when personal, regulated, or confidential data is involved. For vendor tools, confirm what data the provider keeps, how models are trained, what contract terms apply, and how fast the vendor must notify you if something changes.

Human oversight also matters in higher-risk uses. If AI supports a credit, hiring, medical, legal, or customer-impacting decision, someone accountable should review the output before action.

Then set stop-use triggers. For example, pause the use case if outputs drift, the vendor changes terms, sensitive data exposure is found, or the tool starts affecting customers in ways you did not approve. These are the same kinds of incident decision rights and escalation thresholds that help boards avoid confusion in other risk areas.
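Stop-use triggers work best when they are written as explicit conditions rather than left to judgment in the moment. The sketch below assumes a hypothetical `UseCaseSignals` record whose four flags mirror the examples in the previous paragraph. How each flag gets set, for instance what counts as drift, is left to your own monitoring and is not defined here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCaseSignals:
    """Hypothetical status flags reported for one AI use case."""
    output_drift_detected: bool = False
    vendor_terms_changed: bool = False
    sensitive_data_exposure_found: bool = False
    unapproved_customer_impact: bool = False


def stop_use_reasons(signals: UseCaseSignals) -> list[str]:
    """List every stop-use trigger that has fired."""
    checks = [
        (signals.output_drift_detected, "outputs have drifted"),
        (signals.vendor_terms_changed, "vendor changed terms"),
        (signals.sensitive_data_exposure_found, "sensitive data exposure found"),
        (signals.unapproved_customer_impact, "unapproved customer impact"),
    ]
    return [reason for fired, reason in checks if fired]


def should_pause(signals: UseCaseSignals) -> bool:
    """Pause the use case if any single trigger has fired."""
    return bool(stop_use_reasons(signals))


if __name__ == "__main__":
    status = UseCaseSignals(vendor_terms_changed=True)
    print(should_pause(status), stop_use_reasons(status))
```

Returning the reasons, not just a yes or no, gives the accountable owner something specific to escalate.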

What good board reporting looks like when AI is moving fast

Boards do not need a long AI lecture. They need a short view of exposure, movement, and decisions.

Useful reporting shows change over time. It shows where AI use is concentrated. It shows control gaps, incidents, exceptions, and pending decisions. Most of all, it shows ownership.

That is the same discipline behind board-ready risk reporting. The board should see what changed, what it means, what management is doing, and where support or approval is needed.

Give the board a short dashboard, not a long AI lecture

Keep the dashboard tight. A strong AI board update can often fit on one page.

Include the number of material AI use cases, the spread across low, medium, and high-risk tiers, the top vendor dependencies, open exceptions, control gaps, incidents or near misses, and any board decisions needed. Add trends, not just point-in-time counts.

If the report cannot show who owns each material issue, it is not ready.
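For teams that keep the inventory in a structured form, the core dashboard numbers can be computed rather than assembled by hand. This is a minimal sketch under stated assumptions: the `MaterialUseCase` record, the tier labels, and the `dashboard_summary` function are all hypothetical, and a real report would add exceptions, control gaps, and incidents from your own sources.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class MaterialUseCase:
    """Hypothetical record for one material AI use case."""
    name: str
    owner: str        # empty string means no named owner
    risk_tier: str    # assumed labels: low, medium, high
    vendor: str


def dashboard_summary(current: list[MaterialUseCase], previous_total: int) -> dict:
    """Build a one-page summary with a simple quarter-over-quarter trend."""
    tiers = Counter(u.risk_tier for u in current)
    vendors = Counter(u.vendor for u in current)
    return {
        "material_use_cases": len(current),
        # Trend, not just a point-in-time count.
        "change_since_last_quarter": len(current) - previous_total,
        "by_tier": {t: tiers.get(t, 0) for t in ("low", "medium", "high")},
        "top_vendors": vendors.most_common(3),
        # Items with no owner signal the report is not ready.
        "missing_owner": [u.name for u in current if not u.owner.strip()],
    }


if __name__ == "__main__":
    current = [
        MaterialUseCase("support chatbot", "Head of Service", "medium", "VendorA"),
        MaterialUseCase("resume screening", "", "high", "VendorB"),
        MaterialUseCase("invoice coding", "Controller", "low", "VendorA"),
    ]
    print(dashboard_summary(current, previous_total=2))
```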

Questions you should ask before the next board meeting

Use a few sharp questions to test whether AI governance for boards is real or mostly implied:

Do you have a current list of material AI use cases, with named business owners?

Which AI uses touch customer data, regulated data, or material business decisions?

What AI activities fall into the high-risk tier, and who approved them?

Which vendors create the most AI dependence, and what happens if they fail or change terms?

What employee AI use is allowed today, and how is that communicated?

What triggers require escalation to the CEO, committee chair, or full board?

What changed since last quarter, and what decision do you need from us now?

If management can answer those cleanly, governance is taking shape. If not, fix visibility before adding more policy.

Frequently asked questions about AI governance for boards

Does every AI use case need board approval?

No. Board approval should focus on material risk, strategy, and policy boundaries. Routine use inside approved guardrails should stay with management. If the board reviews everything, it will slow the business and still miss the real outliers.

How formal should AI governance be for a mid-sized company?

It should match your size, data sensitivity, use cases, and legal exposure. Start simple. Use a small working group, a short inventory, basic controls, and a clear risk tier model. Then add structure as AI use spreads or stakes rise.

Who should own AI governance day to day?

Usually, management should assign a clear coordinator, often in legal, risk, security, or technology, backed by a cross-functional group. The mistake is shared ownership with no decision maker. Coordination needs a name, not a slogan.

Good AI governance for boards is not a brake. It is a way to replace confusion with clear lanes, clear owners, and clear escalation.

This quarter, review your current AI use cases. Assign owners. Define high-risk triggers. Tighten the board dashboard before you add more policy. If you do those four things well, you will gain speed, not lose it, because the business will know how to move without guessing.

About the author: Tyson Martin advises boards, CEOs, and executive teams on cyber, AI, technology governance, and oversight. He helps leadership teams clarify decision rights, improve reporting, and make stronger decisions when technology risk starts affecting business risk.

Sources referenced: NIST AI Risk Management Framework (AI RMF 1.0), OECD AI Principles