The 2026 Board Agenda: Why Cyber and AI Oversight Now Move Together

See why cyber and AI oversight now move together in 2026, and what your board should ask to spot risk gaps before they spread.

In 2026, you can't oversee AI well without understanding cyber risk. You also can't oversee cyber risk well without seeing how AI changes systems, data flows, vendors, employee behavior, and business decisions.

That shift matters because the board doesn't own two separate problems. You own one operating picture. If AI use expands faster than governance, your exposure expands with it. If cyber reporting ignores AI, your board sees only part of the risk. That weakens trust, decision quality, and your ability to act early.

You don't need deep technical mastery. You need a clearer line of sight into where AI is used, what it depends on, how it can fail, and who is accountable when it does. That is now a core board duty.

Key takeaways

Cyber and AI oversight now rise or fall together because AI changes your exposure, not only your tools.

Separate reporting creates blind spots around trust, uptime, legal risk, and vendor dependence.

Strong oversight gives you one integrated view of risk, resilience, and business impact.

The board needs clear ownership, escalation triggers, and decision-ready reporting.

Your next step is simple: ask management for one combined cyber and AI view at the next meeting.

If cyber appears in one report and AI in another, your board may miss the risk that sits between them.

Why cyber and AI oversight now rise and fall together

AI is no longer a side project. It is now inside customer products, internal workflows, analytics, support tools, vendor platforms, and employee routines. As a result, AI risk often shows up through familiar channels: security gaps, privacy failures, bad access control, weak vendor terms, and poor governance.

That is why the old split no longer works. You can't treat cyber as infrastructure risk and AI as strategy talk. When AI changes how data moves, who can access it, or what actions get automated, it changes your cyber posture too. It also changes your legal exposure, service reliability, and customer trust.

For boards, the business issue is clear. You are not deciding whether AI is good or bad. You are deciding whether the company can use it with enough control, visibility, and accountability to protect the business while it grows.

AI expands the attack surface, but it also changes how decisions get made

AI adds more than software. It can alter who gets access, which data gets pulled into new tools, how work is approved, and when decisions move from people to systems. That means your risk is no longer limited to a bad actor breaking in. It also includes poor model use, bad data, weak controls, and automation that outpaces oversight.

This is why boards should revisit their technology risk thresholds. If your risk appetite was set before AI touched key workflows, it may no longer reflect the company you are running.

You should also expect management to explain where AI influences material decisions. If that answer is vague, your oversight is already behind the business.

Boards face one risk picture, even when management reports cyber and AI separately

Management often splits these topics across teams. Security reports on threats and controls. Another team reports on AI adoption, use cases, or policy work. That may fit the org chart, but it doesn't fit the board's job.

You care about the combined effect on uptime, revenue, trust, regulatory exposure, and execution. You need one story about where risk is moving, where dependence is growing, and where leadership still lacks clear control. Well-framed governance questions from directors can close that gap quickly.

Where boards still get this wrong

Most failures here are structural. The issue is rarely a lack of effort. The issue is that oversight design has not caught up with how the business now uses AI, data, and vendors.

When that happens, the board sees motion but not control.

The board gets activity updates, but not a decision-ready view of risk

You may get dashboards, project updates, policy summaries, and training reports. None of that is enough if it doesn't tell you what changed, what matters now, and what decision needs board attention.

Busy reporting often hides the real problem. Weak ownership stays buried. Thresholds stay fuzzy. Vendor concentration grows in the background. AI use spreads faster than control testing. Meanwhile, the board hears that teams are "making progress."

Good reporting should help you translate cyber risk into business impact. It should show trends, not trivia. It should connect AI use to access risk, third-party reliance, incident readiness, and material business consequences.

If the report does not support a decision, it is not board-ready.

Ownership gets fuzzy when AI touches many teams at once

AI rarely sits in one box. It touches technology, security, legal, compliance, operations, procurement, and business units. That spread creates confusion fast when decision rights are not settled in advance.

One team may approve a tool. Another may own the data. A vendor may host the model. Security may set controls. Legal may review terms. Then an exception appears, or a failure occurs, and nobody is sure who can stop use, approve risk, or escalate to the board.

This is where leadership gets exposed. Unclear ownership leads to weak data controls, uneven model use, poor exception handling, and slow incident response. Those are governance failures before they are technical failures.

What good combined oversight looks like in 2026

You do not need a larger stack of reports. You need a cleaner operating picture, with clear ownership and clear decisions.

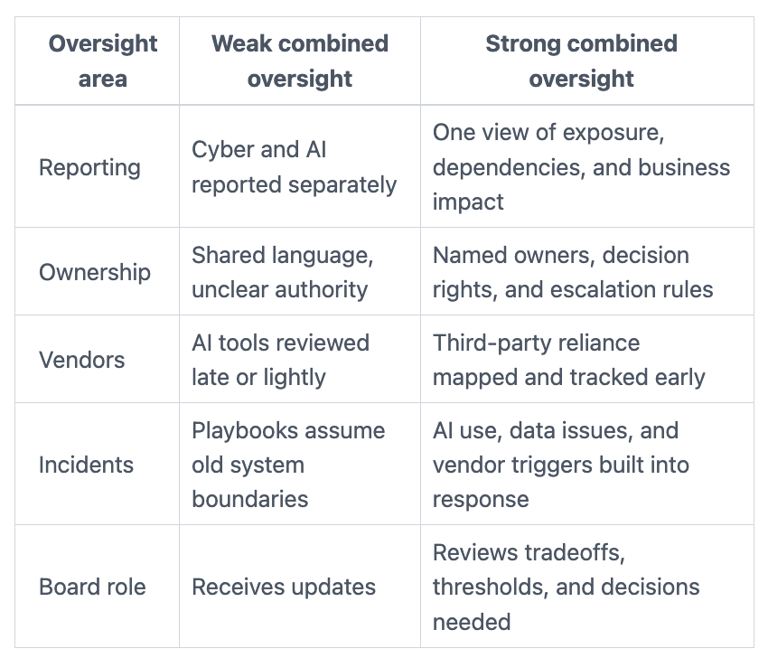

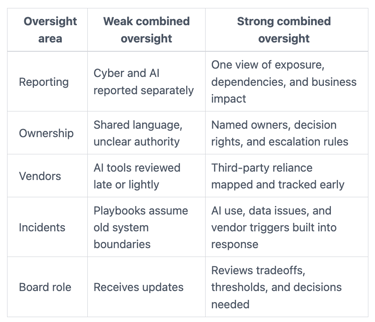

This side-by-side view helps you see the difference.

The takeaway is simple. Strong oversight reduces surprise because it makes the combined risk picture visible before pressure hits.

You ask for one integrated story about risk, resilience, and business impact

Management should connect AI use, cyber exposure, third-party dependence, incident readiness, and control gaps into one board-ready narrative. That does not mean more slides. It means clearer tradeoffs.

For example, if the company is using AI in customer support, underwriting, pricing, fraud review, or product workflows, the board should hear how that changes data handling, identity risk, vendor dependence, outage impact, and trust exposure. This mirrors established board cyber governance practice, where oversight is tied to decisions and evidence.

You are looking for one answer to one board question: what has changed in our exposure, and what do you need from us now?

You define decision rights before pressure exposes the gaps

Boards should expect management to clarify who approves higher-risk AI use, who owns controls, when exceptions expire, and what triggers board notice. If those rules only exist in people's heads, they will fail when the stakes rise.

This matters most in incident conditions. A vendor issue, data leak, failed control, or harmful output can force fast calls across several teams at once. Your board should know the escalation path before that moment arrives. A practical board playbook for incident governance helps keep those decisions calm and documented.

Good oversight feels boring before a crisis. That is usually a good sign.

The questions your board should ask before the next meeting

Your next move is not another policy review. It is a sharper set of questions that test visibility, ownership, resilience, and decision quality.

Questions that test whether AI oversight is real, not performative

Ask management questions that tie AI use to material business outcomes:

Where is AI already influencing material decisions, customer outcomes, or core workflows?

What data, vendors, and access paths do those systems depend on?

What can fail in a way that harms revenue, operations, trust, or legal position?

Who owns exceptions, model use approvals, and time-limited risk acceptance?

How would leadership know if controls were failing, drifting, or being bypassed?

Questions that reveal whether cyber oversight still matches the company you are becoming

Then test whether your cyber oversight still fits current reality:

Does board reporting reflect actual AI use, or only traditional security topics?

Where are we most dependent on a small number of vendors, platforms, or identities?

Which changes in access, automation, or data movement have raised exposure this year?

What incident thresholds would trigger board notice when AI or vendor failure is involved?

Would the board get early warning on a meaningful shift in combined cyber and AI risk?

Quick answers to common boardroom questions

Does every board need a separate AI committee?

Usually no. Most boards need better oversight design, not another committee. If your current structure can assign ownership, set cadence, and bring material issues forward, that is often enough. The harder problem is clarity.

Is this mainly a compliance issue or a business risk issue?

It is a business risk and governance issue first. Compliance matters, but it is only one part of the picture. Your core duty is to oversee trust, continuity, exposure, and decision quality as the company changes.

How often should the board review cyber and AI risk together?

That depends on materiality, pace of change, and current posture. Many boards should review core signals every quarter, with deeper discussion whenever AI adoption, vendor dependence, or incident exposure changes in a meaningful way.

Cyber and AI oversight are now part of the same board agenda because they shape the same business outcomes. You do not need perfect technical fluency to govern them well. You need an integrated view, clear ownership, and reporting that supports action.

For your next meeting, ask management to bring one combined cyber and AI oversight view. It should show material uses, top dependencies, current gaps, incident triggers, and the decisions that need board attention now. That single change can improve visibility faster than another quarter of separate updates.



© 2026. All rights reserved.
