Navigating the AI Hype Cycle Without Chasing Noise

Learn how to separate strategic AI bets from noise so you can improve oversight, value, timing, and control.

Navigating the AI hype cycle means separating moves that change how you compete from moves that only create pressure. For you as a board member, CEO, founder, or executive, the issue is simple. A real AI decision should improve revenue, cost, speed, trust, resilience, or decision quality.

The danger starts when hype outruns ownership, proof, and governance. Then you get rushed pilots, weak reporting, unclear risk, and spending that feels active but changes little. That is where disciplined judgment matters.

Key takeaways for leaders making AI decisions under pressure

Not every fast-moving AI trend is strategic.

Strategic AI trends have a clear business use case, an owner, and a success measure.

Noise often shows up as vague promises, vendor urgency, and missing proof.

You should test AI bets through value, risk, fit, timing, and operating readiness.

The goal is faster decisions with better control, not slower innovation.

Why the AI hype cycle creates confusion at the leadership level

AI creates a rare mix of real opportunity and loud pressure. Because of that, even smart leaders get pulled into weak decisions. Boards hear that peers are moving. Investors ask about strategy. Vendors push hard. Management teams feel they need an answer now, even when the internal picture is still blurry.

That pressure gets worse when visibility is weak. If you can't see where AI is already in use, who approved it, what data it touches, or what value it should create, every new tool can sound urgent. As a result, leadership discussions drift toward headlines instead of choices.

For many teams, the problem isn't lack of ambition. It's lack of decision structure.

The market moves faster than your governance model

AI adoption often moves faster than your operating model can handle. Teams test tools before legal reviews finish. Vendors promise speed before risk owners are named. Budget requests show up before reporting exists. Meanwhile, the board still expects clear oversight.

When decision rights lag, urgency fills the gap. A new AI product lands, and nobody knows who can approve it, who measures it, or who owns the downside if it fails. That is how good intentions turn into fragmented pilots and hidden exposure.

If your governance structure already feels stretched, board cyber governance practices offer a useful model for setting ownership, cadence, and escalation before pressure rises.

Buzzwords hide the difference between real capability and theater

Hype usually arrives in polished language. You hear "transformation," "autonomy," or "intelligence at scale." Yet the basic questions stay unanswered. What problem does this solve? What changes if it works? How will you know?

A weak AI bet often has the same signs:

The use case is vague.

The promise is broad, but the metric is missing.

The demo looks strong, but the operating owner is unclear.

The vendor drives the timing.

The risk review is treated as a delay, not part of the decision.

If you can't explain the business gain, the operating owner, and the failure mode in plain language, you are looking at pressure, not strategy.

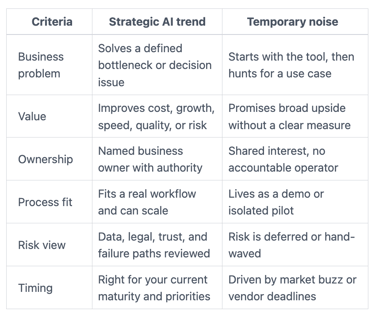

How to tell whether an AI trend is strategic or just temporary noise

You need a simple test that works in real meetings. Start with business value, then check durability, then check readiness. That sequence keeps you from mistaking novelty for advantage.

The pattern is simple. Strategic trends survive contact with operations. Noise depends on excitement.

Start with the business problem, not the tool

A strong AI decision begins with a business issue you already care about. It may be a slow claims process, weak forecasting, high service costs, delayed underwriting, or poor knowledge access inside the company. The tool comes later.

That matters because tools can look impressive while solving nothing important. You want to know which workflow improves, which decision gets better, and what metric should move. If the answer is fuzzy, stop there.

This is the same discipline you use when setting tech risk thresholds. You define what the business can tolerate, what matters most, and what tradeoffs are acceptable. AI should go through the same filter.

Look for durable value, not short-term excitement

A strategic trend has staying power because it can move from pilot to repeatable use. That means the use case appears more than once, the process can absorb it, and the outcome can be measured over time.

Look for signals such as a repeatable workflow, a manageable control model, clear adoption steps, and a path to scale. You also want to know whether the value lasts after the first excitement fades. Public interest is not a business case.

By contrast, noise usually depends on novelty. The pilot wins attention, but there is no clear next stage. The demo impresses, but the workflow still depends on workarounds. The team celebrates activity, while the business outcome stays unchanged.

Check whether your company is actually ready to use it well

Even a valid trend can be the wrong move for you right now. Readiness matters. Poor data quality, weak process discipline, thin legal review, missing reporting, and unclear ownership can turn a good idea into an expensive distraction.

Keep the readiness check plain. Ask whether your data is usable, your process is stable, your workforce can adopt the change, and your leaders can monitor results. Also ask whether risk review is built in early enough to shape the decision rather than slow it after the fact.

When reporting is weak, leadership often approves AI before it can see what is working. Decision-ready cyber metrics and board reporting offer a useful frame for what good visibility looks like.

The leadership questions that expose weak AI bets early

A solid AI discussion should surface value, ownership, execution, risk, and oversight in minutes. You do not need technical detail first. You need clear answers first.

Questions about value, ownership, and execution

Use direct questions that make weak cases visible:

What problem are you solving, in business terms?

How will you measure success in 90 days and 12 months?

Who owns the outcome, by name and role?

What changes in operations if this works?

What process, decision, or bottleneck gets better?

What happens if adoption stalls?

These questions help you separate a real operating move from a hopeful experiment. They also stop the common slide from curiosity into commitment.

For boards and committees, this same style of challenge works well in broader oversight settings. Board questions exposing cyber blind spots show how direct questions improve accountability without dragging you into technical weeds.

Questions about risk, trust, and oversight

AI decisions also need plain questions about downside:

What data is involved?

How are outputs reviewed before they affect customers, staff, or money?

Where could error or bias cause harm?

What vendor dependence are you creating?

What happens if the model is wrong at scale?

What should come back to the board, and what stays with management?

These questions matter because trust is part of the business outcome. If a tool saves time but creates false answers, poor decisions, or customer harm, you did not improve the business. You shifted the risk.

What good AI judgment looks like in practice

Good judgment is steady. You do not say yes to everything. You also do not freeze while the market moves. You choose where AI matters most, set boundaries, and build enough control to move with confidence.

Run small tests, but govern them like they matter

Disciplined pilots are useful because they create proof without forcing a full commitment. Still, a pilot only helps if it has a tight scope, limited data exposure, a named owner, and a clear success measure.

At the end of the pilot, you need a decision: stop it, expand it, or redesign it. Too many companies skip that step and leave half-formed pilots scattered across teams. Then experimentation becomes noise at scale.

Build an AI decision rhythm your board can trust

Your leadership team should report AI use the same way it reports other material technology issues. You need to see where AI is in use, what value it is creating, what risks changed, who owns each use case, and what needs a decision now.

You also need escalation rules before something breaks. If an AI-enabled process harms customers, exposes sensitive data, or creates a material control issue, the path upward should be clear. Board incident response oversight is a good reminder that leadership loses time when authority and reporting are vague.

A steady reporting rhythm lowers the pressure around each new AI decision. It also makes your choices more defensible.

FAQ: What leaders still ask when the AI story sounds convincing

Are you falling behind if you wait?

Not if you are waiting for a sharper decision. Delay becomes a problem when it hides indecision. A short, structured review is different. It helps you move with purpose instead of reacting to noise.

How much AI governance is enough?

You need enough governance to know who owns the use case, what data is involved, how value is measured, and when risk escalates. Beyond that, keep it practical. The goal is control that supports action.

Should every AI project go to the board?

No. Most should stay with management. The board should see the uses that touch material risk, sensitive data, major spending, brand trust, regulated activity, or meaningful strategic change.

How do you push back on vendor pressure without slowing the business?

Ask for proof in your terms. Request the use case, operating owner, success metric, data impact, and path to scale. Strong vendors can handle that. Weak ones rely on urgency.

The next move is simple. Review your current AI efforts and sort them into three groups: strategic, experimental, and noise. Then assign owners, set success measures, and ask for reporting that shows value, risk, and readiness.

That is how you keep momentum without losing control. Navigating the AI hype cycle is not about cynicism. It is about disciplined judgment before the next budget review, board discussion, or vendor pitch forces a rushed answer.

Providing plain-English technology oversight to help Boards and CEOs lead with confidence and make defensible risk decisions.

© 2026. All rights reserved.
