AI Governance for Boards Before Risk Outruns Oversight

AI governance for boards helps you spot where AI shapes risk, ownership, and decisions before weak oversight turns into customer, legal, or board trouble.

AI governance for boards is not about becoming technical. It is about making sure AI use does not move faster than oversight, accountability, and risk visibility.

If you cannot see where AI is in use, who owns it, what decisions it shapes, and how failure would show up, risk will outrun governance. That gap usually appears before the company has a formal AI problem on paper. It shows up in hiring choices, pricing moves, customer interactions, forecasts, vendor workflows, and internal decisions that start to carry more weight than anyone intended.

That is why this belongs in the boardroom sooner than many leaders expect. You do not need to inspect models. You do need a clear view of where AI changes business risk, where judgment can fail, and where accountability gets blurry.

The practical test is simple. Can management show you where AI is used, who approves it, how it is monitored, and what would trigger escalation to the board?

Key takeaways for directors who need a fast read

You need a current map of where AI is in use, including internal tools, customer-facing systems, vendor products, and live pilots.

You should know which AI uses influence high-impact decisions, such as customer outcomes, revenue, hiring, safety, or legal exposure.

Clear ownership matters more than broad enthusiasm. Every meaningful AI use case needs an executive owner, approval path, and review cadence.

Board-level reporting should show exposure, change, incidents, near misses, and the business effect of getting AI wrong.

Weak visibility is the early warning sign. If management cannot name AI uses clearly, governance is already behind.

Before adoption expands, fix the gaps in ownership, escalation, and reporting.

Why AI becomes a board issue before it looks like one

AI often starts as a tool choice. Then it becomes a decision system. That shift is why boards get pulled in, even when the technology seems small at first.

Once AI starts shaping real outcomes, it affects trust, reputation, legal exposure, and operating quality. A weak answer from a chatbot may be annoying. A flawed output that changes pricing, hiring, underwriting, or fraud response is different. That is no longer a tech issue alone. It is a governance issue.

AI changes how decisions get made, approved, and challenged

AI can quietly shape more decisions than you think. It may score job candidates, draft customer replies, rank leads, flag suspicious transactions, or suggest actions in security operations.

Those uses can save time. They can also weaken human review if teams trust the output too easily. When that happens, the real risk is not only a bad result. The risk is that nobody can explain why the result happened, who approved the use, or how the company would catch drift before harm spreads.

The real problem is often weak ownership, not advanced technology

In many companies, AI risk grows through blur, not through complexity. One team buys an AI feature through a vendor. Another starts a pilot. A third uses a public tool in daily work. Soon, AI is influencing decisions across the business, but nobody owns the full picture.

That is why board cyber governance best practices matter here too. Strong oversight starts with named owners, clear thresholds, and a reporting rhythm that makes drift visible.

What directors need to see before AI risk gets ahead of them

Good AI oversight is not a thick policy binder. It is a short set of decision-useful views that help you see exposure early.

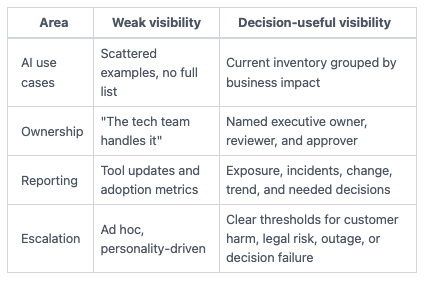

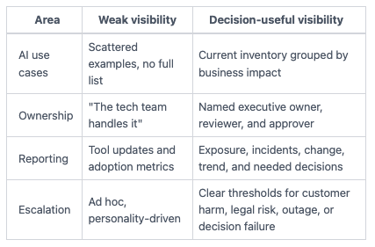

This table shows the difference between weak visibility and board-ready visibility.

The takeaway is simple. You cannot govern what you cannot name.

You do not need model math. You need line of sight, named owners, and rules for when AI risk comes upstairs.

A clear map of where AI is being used and why it matters

Start with an AI use-case map. Ask management to show every material use, not only the high-profile ones. That includes internal productivity tools, customer-facing uses, embedded vendor AI, decision support tools, and pilots that could become production systems.

Then group those uses by business impact. Which ones touch customers? Which ones shape money, access, hiring, safety, or regulated decisions? Which ones rely on vendor claims that the company has not tested itself?

You already do a version of this when setting technology risk appetite. AI belongs in that same frame. The question is not, "Are we using AI?" The question is, "Which AI uses create business harm if they fail or drift?"
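For teams that keep the use-case map as structured data rather than a slide, the grouping step above can be sketched as follows. This is a minimal illustration, not a prescribed format: the `AIUseCase` fields, the impact-area labels, and the sample entries are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass

# Impact areas drawn from the questions above; the labels are illustrative.
HIGH_IMPACT_AREAS = {"customers", "money", "access", "hiring", "safety", "regulated"}


@dataclass
class AIUseCase:
    name: str
    category: str              # e.g. "internal", "customer-facing", "vendor", "pilot"
    impact_areas: set[str]     # which business outcomes this use touches
    vendor_claims_untested: bool = False


def group_by_impact(use_cases):
    """Return {impact_area: [use-case names]} for high-impact areas only."""
    grouped = defaultdict(list)
    for uc in use_cases:
        for area in sorted(uc.impact_areas & HIGH_IMPACT_AREAS):
            grouped[area].append(uc.name)
    return dict(grouped)


def untested_vendor_claims(use_cases):
    """Names of use cases that rely on vendor claims the company has not tested."""
    return [uc.name for uc in use_cases if uc.vendor_claims_untested]


# Hypothetical entries, for illustration only.
use_cases = [
    AIUseCase("resume screening", "vendor", {"hiring"}, vendor_claims_untested=True),
    AIUseCase("support chatbot", "customer-facing", {"customers"}),
    AIUseCase("meeting summaries", "internal", set()),
]

by_impact = group_by_impact(use_cases)
untested = untested_vendor_claims(use_cases)
```

A use with no high-impact area, such as the meeting summaries entry, stays on the map but drops out of the grouped view, which keeps board attention on the uses that can cause business harm.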

Named owners, approval rules, and escalation thresholds

Next, ask who owns policy, who approves new use cases, who monitors outcomes, and when issues must be escalated.

Board oversight and management execution should stay distinct. You approve the guardrails. Management runs the process inside them. That means management should own day-to-day approvals, testing, and monitoring. The board should own the threshold logic, the reporting expectations, and the oversight of material exceptions.

If ownership is split across legal, IT, security, product, and operations with no lead owner, that is a governance gap.
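One way management could test for that gap is a simple completeness check on each use case's ownership record. The sketch below is hypothetical: the `Governance` fields mirror the four questions in this section, and the role names in the example are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Governance:
    use_case: str
    lead_owner: Optional[str]            # single accountable executive
    approver: Optional[str]              # who approves new or changed use
    monitor: Optional[str]               # who watches outcomes after launch
    escalation_threshold: Optional[str]  # plain-language trigger for escalation


def governance_gaps(record):
    """Return the ownership questions that have no answer for this use case."""
    checks = {
        "lead owner": record.lead_owner,
        "approver": record.approver,
        "monitor": record.monitor,
        "escalation threshold": record.escalation_threshold,
    }
    return [label for label, value in checks.items() if not value]


# Hypothetical record: approval and monitoring exist, but nobody leads
# and no escalation trigger has been defined.
record = Governance(
    use_case="fraud flagging",
    lead_owner=None,
    approver="Head of Risk",
    monitor="Fraud Operations",
    escalation_threshold=None,
)

gaps = governance_gaps(record)
```

An empty result does not prove governance is working. It only shows that each question has a named answer the board can then challenge.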

Reporting that shows exposure, drift, and decision impact

Board reporting on AI should not read like a product update. It should show where exposure sits, what changed, and what requires a decision.

Good reporting includes major AI use cases, risk tiering, model or vendor changes, incidents or near misses, open control gaps, and any approvals management wants from leadership. It should also show trend, not only snapshots. If a use case has moved from pilot to customer impact since last quarter, that matters.

A useful model is the same discipline used in board reporting for cybersecurity programs. Focus on business consequence, trend, and choices, not technical theater.
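The trend view described above can be as simple as comparing two quarterly snapshots of the use-case map. The sketch below assumes each snapshot is a plain mapping from use-case name to a stage label. The stage names and sample data are illustrative, not a standard.

```python
def stage_changes(previous, current):
    """Compare two {use_case: stage} snapshots.

    Returns (name, before, after) tuples for use cases that are new
    or whose stage changed since the previous snapshot.
    """
    changes = []
    for name, stage in sorted(current.items()):
        before = previous.get(name)
        if before is None:
            changes.append((name, "new", stage))
        elif before != stage:
            changes.append((name, before, stage))
    return changes


# Hypothetical snapshots for two consecutive quarters.
last_quarter = {"support chatbot": "pilot", "lead ranking": "pilot"}
this_quarter = {
    "support chatbot": "customer impact",
    "lead ranking": "pilot",
    "fraud flags": "pilot",
}

changes = stage_changes(last_quarter, this_quarter)
```

Here the report would surface two items: a new fraud-flagging pilot, and a chatbot that moved from pilot to customer impact. The second is the kind of shift that should prompt a fresh look at risk tier, owner, and escalation path.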

Where AI governance usually breaks down

Most boards do not get blindsided because nobody cared. They get blindsided because adoption spread faster than the oversight model.

That breakdown often starts during growth. Teams move fast, vendors add AI features, and pilots look harmless. Then a small use becomes a real dependency.

Pilot projects grow up before governance does

A pilot can begin as a harmless test. Then it helps answer customer questions, rank cases, route tickets, or guide staff decisions. After a few months, people trust it. Workflows adapt around it. Yet no one has revisited the risk, the owner, or the escalation path.

That is how boards get false comfort. The company still talks about "experiments," while the business is already relying on them.

Vendor AI creates hidden exposure the board never meant to accept

You may inherit more AI risk through vendors than through your own teams. Software providers, outsourced services, support platforms, and workflow tools now ship AI features by default.

If nobody asks where vendor AI sits in key processes, you may accept risk without meaning to. Vendor claims are not the same as oversight. You still need to know what data those tools touch, what decisions they influence, and what happens when outputs are wrong.

That is also why AI issues can turn into incident issues fast. If a vendor model creates customer harm, access errors, or service disruption, your board still needs the clarity that sound board incident response oversight provides.

The questions your board should ask this quarter

If you want sharper oversight now, start with questions that test visibility, ownership, and decision usefulness. You are not trying to run the program. You are trying to see whether governance is ahead of adoption, or behind it.

Questions that test visibility, accountability, and control

Where are we using AI today, by business function and vendor dependency?

Which uses could materially affect customers, revenue, operations, hiring, or legal exposure?

Who approves new AI use cases, and who owns monitoring after launch?

Which AI uses have moved from pilot to operational dependency since last quarter?

What incidents, near misses, or exceptions have occurred?

Where are we relying on vendor claims rather than internal review?

If your audit committee needs a sharper challenge model, these audit committee cyber risk questions offer a strong pattern for testing ownership and evidence.

Questions that reveal whether reporting is decision-useful

What does the board need to decide now, if anything?

What thresholds trigger escalation to the board chair, committee chair, or full board?

Where is management least confident in current AI oversight?

What must be fixed before AI use expands further?

If the answers are vague, reporting is probably behind the risk.

FAQ: Practical concerns directors raise about AI governance

Does every company need a formal AI policy?

Not every company needs a long standalone policy right away. But every company using AI in meaningful ways needs clear rules, named owners, approval paths, and reporting expectations.

How is AI governance different from cyber oversight?

Cyber oversight focuses on threats, control strength, incident readiness, and business disruption. AI governance adds decision quality, output risk, use-case approval, model drift, and human review.

Does vendor AI count as part of board oversight?

Yes. If a vendor tool uses AI and affects your data, customers, workflows, or decisions, it counts. Outsourced risk is still your business risk.

When should the board seek outside help?

Bring in outside help when ownership is unclear, reporting is weak, adoption is moving fast, or the board cannot tell where AI risk is concentrated. That is often before a failure, not after.

Boards do not need perfect technical fluency. They need clear sightlines. If you can see where AI is used, who owns it, what decisions it shapes, and how failure would surface, you can govern it with discipline.

If you cannot see those things, risk is already moving faster than oversight.

Before your next board meeting, ask management for three items: an AI use-case map, a named ownership model, and clear escalation thresholds. That will tell you more than another high-level AI update ever will.

Tyson Martin advises boards and CEOs on cyber risk, technology governance, AI oversight, and decision clarity under pressure. His work focuses on helping leaders see what matters, assign ownership, and make stronger decisions with fewer surprises.


© 2026. All rights reserved.
