AI Governance for Boards That Leads to Better Decisions

AI governance for boards helps you set clear decision rights, spot material risk, and challenge management without slowing the business.

AI governance for boards should help you decide, not bury you in policy. You need a clear way to judge where AI is fine, where risk is too high, who owns the call, and when an issue comes back to the board.

That matters now because AI is moving faster than most oversight models. Meanwhile, management updates are often vague, vendors make bold claims, and boards get asked to bless pilots and tools without a clean decision model. The result is predictable: speed without clarity, risk without ownership, and oversight without traction.

A useful framework fixes that. It gives you a simple way to sort routine uses from material ones, challenge management without slowing the business, and make decisions you can defend later.

Key takeaways for boards under pressure

AI governance for boards should match business use, not sit as a generic policy stack.

You need clear decision rights before AI risk shows up, not after a problem surfaces.

The board should focus on material AI uses, not approve every tool or experiment.

Risk thresholds should be visible, simple, and tied to business impact.

Reporting should tell you what changed, what matters, and what decision is needed.

A steady review rhythm beats a once-a-year policy exercise.

What a board-level AI governance framework is really for

A board-level framework exists to improve judgment. It should help you move faster where risk is low, slow down where impact is high, and assign accountability before the room gets tense.

In business terms, that means better speed, cleaner ownership, and stronger defensibility. It also supports trust. Customers, regulators, investors, and employees will care less about your AI policy library than about whether you can explain how decisions were made, who approved them, and what controls were in place.

Good governance creates clarity. Bad governance creates friction.

You can feel the difference quickly. Clear governance helps management know what it can approve, what it must escalate, and what evidence the board expects. Friction-heavy governance does the opposite. It adds committees, vague principles, and slides that sound careful but don't help anyone decide.

This matters because AI is no longer a side issue. It can affect revenue, customer treatment, legal exposure, operating reliability, and brand trust. If your governance model can't sort those stakes cleanly, you're left with noise.

The goal is better decisions, not more paperwork

The board doesn't need to manage models or review prompts. You need a way to understand which AI decisions are routine, which are strategic, and which could create material harm.

That distinction changes everything. A low-risk internal productivity tool is not the same as AI that shapes pricing, hiring, underwriting, medical advice, fraud decisions, or customer communications. One may stay with management. The other may need clear board visibility.

If your framework doesn't help you separate low-risk use from high-impact use, it isn't governance. It's paperwork.

Useful oversight is decision-focused. Checkbox oversight is comfort-focused. One helps you act. The other helps you feel busy.

Why many AI oversight efforts fail before they start

Most failures are plain. You don't have a shared definition of material AI use. Ownership is blurry. Vendors drive adoption faster than leadership sets guardrails. Reporting is fragmented. Board work and management work get mixed together.

That last point causes real damage. When the board tries to supervise everything, it slows the business. When the board stays too high-level, material uses slide through with weak challenge.

You also see failure when AI gets treated as only a legal or technical issue. It isn't. It's a business decision issue with legal, operational, trust, and technology consequences. If no one owns the full picture, risk drifts.

The five parts of AI governance for boards that actually work

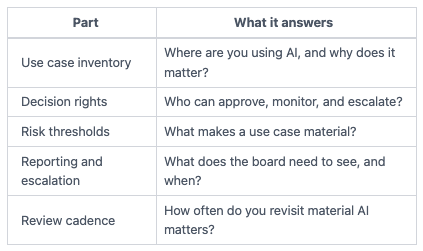

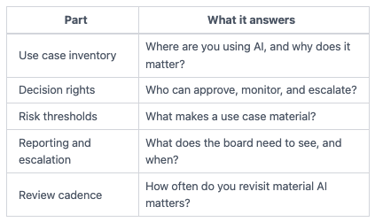

You don't need a heavy model. You need one that matches how boards decide. A practical framework has five parts.

This simple table shows the structure.

Together, these parts give you a stable operating picture. They also fit well with broader board cyber governance best practices, because the mechanics are the same: clear thresholds, clear ownership, and repeatable review.

Start with where AI is used and why it matters

You can't govern what you can't see. So the first step is a current view of AI use across the business.

That inventory should include internal productivity tools, customer-facing features, automated decision support, and third-party platforms with embedded AI. However, don't build it like a technical asset register. Build it around business impact.

For each use, ask four things. What is the business purpose? Who owns it? What data does it touch? What happens if it fails or behaves badly?

That gives you a usable map. It also shows where AI is already affecting decisions, customer outcomes, or core operations. Without that map, you end up governing the idea of AI instead of the uses that matter.
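If management keeps the inventory in a structured form, the four questions can become required fields. The sketch below is one hypothetical way to do that, not a prescribed schema: the class name `AIUseRecord`, its field names, and the two sample entries are all assumptions made for illustration. It flags any use where one of the four questions is still unanswered.

```python
from dataclasses import dataclass


@dataclass
class AIUseRecord:
    """One entry in a business-impact inventory of AI uses (hypothetical schema)."""
    name: str
    business_purpose: str    # What is the business purpose?
    owner: str               # Who owns it?
    data_touched: list[str]  # What data does it touch?
    failure_impact: str      # What happens if it fails or behaves badly?


def unanswered_questions(record: AIUseRecord) -> list[str]:
    """Return which of the four questions are still blank for this use."""
    answers = {
        "business_purpose": record.business_purpose,
        "owner": record.owner,
        "data_touched": record.data_touched,
        "failure_impact": record.failure_impact,
    }
    return [question for question, answer in answers.items() if not answer]


# Illustrative entries only; these are not real systems.
inventory = [
    AIUseRecord(
        name="Drafting assistant",
        business_purpose="Speed up internal document drafting",
        owner="Head of Operations",
        data_touched=["internal documents"],
        failure_impact="Low: drafts are reviewed before use",
    ),
    AIUseRecord(
        name="Vendor chatbot",
        business_purpose="Answer customer questions",
        owner="",  # nobody has claimed ownership yet
        data_touched=["customer account data"],
        failure_impact="",  # consequence of failure not yet assessed
    ),
]

# Map each incomplete entry to the questions it still has to answer.
gaps = {}
for record in inventory:
    missing = unanswered_questions(record)
    if missing:
        gaps[record.name] = missing

print(gaps)
```

The design choice here is that an entry with a blank owner or a blank failure impact is treated as incomplete rather than low risk, which keeps the map organized around business impact instead of tool names.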

Set clear decision rights before risk shows up

Once you know where AI is used, define who decides what. This is where many companies lose control.

Management should own execution. That includes approving low-risk pilots, setting controls, monitoring outcomes, and handling day-to-day exceptions. The board should approve the governance model, the risk posture, and the escalation rules for material uses.

Blurred ownership is one of the fastest ways to create avoidable risk. If product assumes legal owns it, legal assumes technology owns it, and technology assumes the vendor covered it, nobody is accountable when the issue lands.

Clear decision rights fix that. They also support better challenge in the room. When you know who owns the use case, you know who should answer for design, controls, and outcomes.

Use risk thresholds that tell you when the board must lean in

Not every AI use belongs in the boardroom. If you try to review every tool, you turn governance into delay.

Instead, set materiality triggers. A use case should rise to board attention when it could cause customer harm, affect regulated data, shape financial reporting, create legal exposure, introduce operational dependence, or carry major brand risk.

Those thresholds should be simple enough to apply in a meeting. If management can't tell whether a use falls inside or outside board oversight, your model is too vague. This is the same logic used in boards setting technology risk appetite, where thresholds matter more than slogans.
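One way to test whether your thresholds are simple enough is to see if they can be written down as a short rule. The sketch below encodes the six triggers named above and assumes that any single trigger is enough to bring a use to the board. That any-one rule, the `Trigger` names, and the `route` function are illustrative assumptions; your own thresholds may weigh triggers differently.

```python
from enum import Enum


class Trigger(Enum):
    """Materiality triggers taken from the list above (names are illustrative)."""
    CUSTOMER_HARM = "could cause customer harm"
    REGULATED_DATA = "affects regulated data"
    FINANCIAL_REPORTING = "shapes financial reporting"
    LEGAL_EXPOSURE = "creates legal exposure"
    OPERATIONAL_DEPENDENCE = "introduces operational dependence"
    MAJOR_BRAND_RISK = "carries major brand risk"


def route(triggers: set[Trigger]) -> str:
    """Assumed rule: any single trigger escalates; none stays with management."""
    return "board" if triggers else "management"


# Illustrative cases only.
internal_tool = route(set())
pricing_model = route({Trigger.CUSTOMER_HARM, Trigger.LEGAL_EXPOSURE})

print(internal_tool)  # management
print(pricing_model)  # board
```

If management cannot say which triggers apply to a given use, the rule has nothing to work with, and that gap is itself worth raising.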

Ask for reporting that supports action, not theater

Board reporting should help you decide. It should show your top AI use cases, accountable owners, key risks, incidents or near misses, control gaps, policy exceptions, vendor concentration, and the decision needed from the board, if any.

Weak reporting looks familiar. Generic heat maps. Adoption claims with no control evidence. Dashboards without trend lines. Big language about innovation, but no clear line between routine use and material exposure.

Strong reporting is shorter and sharper. It tells you what changed, what stayed exposed, and whether action is needed. If you're refining that discipline, this guide to cyber reporting focused on business outcomes is a useful parallel.

If a board packet can't tell you what decision is needed, it isn't board-ready.

Build a review rhythm that can keep up with change

Annual policy review is too slow for AI. The business can adopt new tools, change vendors, or expose new data flows in a quarter.

A practical cadence works better. Management should review active, material deployments more often. The board should review material AI matters at least quarterly, or faster if adoption is moving quickly or incidents occur.

The point isn't constant escalation. The point is a stable rhythm that catches drift before it becomes a surprise. That same thinking matters in board incident response oversight, because calm review beats reactive review every time.

How to use the framework in the boardroom without slowing the business

A good framework should make the board more useful, not more intrusive. It gives you a way to challenge management, support responsible speed, and stay out of routine execution.

Use it during strategy discussions by asking whether AI use aligns with business goals and risk posture. Use it during audit and risk discussions by checking thresholds, exceptions, and trend lines. Use it during operating reviews by testing whether ownership is real or just implied.

The questions that lead to stronger oversight

You don't need a long script. You need a few direct questions:

Which AI uses are material to the business right now?

Who owns each one, by name and role?

What are our escalation thresholds?

What would make us pause deployment?

Where are we relying too heavily on vendor assurances?

These questions work because they force management to move from broad claims to accountable answers. They also align with the discipline behind strong audit committee cyber risk questions, which push reporting toward decisions rather than updates.

What good looks like when management brings AI to the board

A clean board update is easy to picture. It starts with the business objective. Then it states the scope of use, the accountable owner, the risk summary, the controls in place, and any remaining concerns.

After that, it gets specific. Does management want approval, acknowledgement, funding, or a policy decision? What happens if the board delays action? What has changed since the last review?

When those basics are present, you can govern without slowing the business. When they are missing, discussion drifts into reassurance, jargon, or vendor language.

Common questions boards ask about AI governance

Does every AI tool need board oversight?

No. The board should focus on material uses, clear decision rights, and visible thresholds. Management should handle routine experimentation within approved guardrails.

Who should own AI governance inside management?

Ownership should be cross-functional, but not vague. Legal, security, technology, data, risk, and business leaders may all play a part. Still, one executive should be clearly accountable for the operating model and escalation path.

How often should the board review AI risk?

Review cadence should track business materiality and speed of adoption. Quarterly review is a sound baseline for material AI matters. Faster change or higher exposure may call for more frequent updates. Steady review is better than last-minute review.

Your next move is simple. Pressure-test your current AI oversight model before the next board meeting.

Start with three actions. Identify your material AI uses. Define the escalation thresholds that bring issues back to the board. Then require one management report built for decisions, not theater.

That won't solve every AI issue in one quarter. It will, however, give you something far better than policy volume: clarity. And clarity is what lets you move with speed, challenge management well, and make decisions you can defend.

Tyson Martin advises boards and CEOs on technology, cyber risk, AI oversight, and operational trust. His work focuses on clearer decision rights, stronger reporting, and fewer avoidable surprises.