Audit Committee Questions: Are You Seeing Real Risk?

A set of pointed questions to help committees differentiate between "activity" (team busyness) and "KRIs" (Key Risk Indicators) that indicate material business exposure.

You can sit through a full audit committee packet, hear a long cyber update, and still not know your real business exposure. That happens when reporting shows motion, effort, and project status, but not whether risk is rising, falling, or crossing a line that matters.

For audit committees, the core issue is simple. Activity can look impressive while material exposure grows in the background. You need questions that test whether a metric shows work completed or risk that could hurt revenue, operations, customers, compliance, or trust.

The goal is decision usefulness. You need to tell the difference fast, ask sharper questions, and insist on reporting that helps you act.

Key takeaways for audit committees under pressure

Activity is not the same as exposure.

KRIs should show business impact, trend, and threshold.

Weak reporting hides what needs a decision.

Good questions expose ownership, tolerance, and escalation gaps.

The committee should ask for signals that support action, not comfort.

Why busy reporting can hide material business exposure

Audit committees often get dashboards full of counts. Alerts reviewed. Patches applied. Training completed. Meetings held. Projects launched. Those numbers can show effort, but they rarely tell you whether the business is safer.

That gap matters because boards govern exposure, not busyness. If your reporting stays at the level of work performed, you can miss growing concentration risk, weak recovery capability, or rising exposure in critical systems. By the time the risk becomes obvious, the business may already be dealing with an outage, legal costs, customer harm, or a public loss of trust.

Strong oversight depends on clearer framing. If you haven't defined thresholds, reporting gets softer. If you haven't named owners, accountability gets blurred. If you haven't tied technical conditions to business consequence, the committee can't judge what matters now.

What counts as activity, and why it can feel falsely reassuring

Activity metrics are the byproducts of running a team. They include patch counts, tickets closed, awareness completion rates, and incidents reviewed. Management needs many of these measures. You do not need to treat them as board-level proof that risk has gone down.

Volume feels reassuring because it suggests motion. More work must mean more control, right? Often it doesn't. A team can close thousands of tickets while one critical identity gap remains open. It can complete training across the company while high-risk users still face rising credential attacks. It can launch major projects while key recovery tests keep failing.

That is why committees confuse effort with control. The packet is full, the charts move, and the room sounds busy. Yet the business may still be exposed where it hurts most.

What a true KRI looks like when the business is actually exposed

A KRI should help you judge material exposure. It should connect to meaningful loss, disruption, legal or regulatory impact, customer harm, or damage to trust. It should also show trend, threshold, and decision relevance.

For example, "patches applied" is activity. "Percent of critical internet-facing assets exposed beyond tolerance for more than 15 days" is closer to a real KRI. One count shows work. The other shows exposure against a limit.
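To make the contrast concrete, here is a minimal Python sketch of how the second metric could be computed. The `Asset` record, its field names, the sample inventory, and the 10 percent tolerance are all hypothetical, chosen only for illustration; a real program would pull this data from its own asset and vulnerability systems.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Asset:
    # Hypothetical inventory record; field names are illustrative only.
    name: str
    critical: bool
    internet_facing: bool
    exposed_since: Optional[date]  # None means no open exposure


def pct_exposed_beyond_tolerance(assets, as_of, max_days=15):
    """Percent of critical internet-facing assets exposed for more than max_days."""
    in_scope = [a for a in assets if a.critical and a.internet_facing]
    if not in_scope:
        return 0.0
    overdue = [
        a for a in in_scope
        if a.exposed_since is not None
        and (as_of - a.exposed_since).days > max_days
    ]
    return 100.0 * len(overdue) / len(in_scope)


# Made-up sample data for the sketch.
as_of = date(2025, 6, 30)
assets = [
    Asset("payments-gateway", True, True, date(2025, 6, 1)),   # 29 days open
    Asset("customer-portal", True, True, date(2025, 6, 20)),   # 10 days open
    Asset("vpn-edge", True, True, None),                       # not exposed
    Asset("partner-api", True, True, date(2025, 5, 1)),        # 60 days open
    Asset("intranet-wiki", False, False, date(2025, 1, 1)),    # out of scope
]

TOLERANCE_PCT = 10.0  # assumed board-approved limit, not a recommendation

kri = pct_exposed_beyond_tolerance(assets, as_of)
print(f"KRI: {kri:.1f}% (tolerance {TOLERANCE_PCT:.1f}%)")  # KRI: 50.0% (tolerance 10.0%)
print("Outside tolerance" if kri > TOLERANCE_PCT else "Inside tolerance")
```

Note what the calculation ignores: the number of patches applied never enters it. Only the share of in-scope assets past the limit matters, which is why the result can be read against a tolerance.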

This is where setting board technology risk appetite becomes practical. If you don't know the acceptable range, you can't know when a KRI should trigger escalation.

If a metric doesn't help you decide, escalate, or challenge ownership, it doesn't belong in the board packet.

The Audit Committee Questions that quickly expose the difference

You don't need more technical detail in committee meetings. You need questions that force translation into business consequence and decision logic.

Questions that test whether a metric shows effort or exposure

Use these questions when a metric looks active but feels vague:

What business loss would this metric warn us about?

If this number worsens, what happens to revenue, operations, customers, or compliance?

What decision would this metric change?

What threshold would trigger escalation to this committee or the full board?

These questions cut through vanity metrics fast. If management can't connect the number to harm, threshold, and decision, you are likely looking at an activity measure, not a KRI.

A useful follow-up is direct and fair: "Show us the metric that tells us whether exposure is inside or outside tolerance." That reframes the conversation toward oversight instead of status reporting.

Questions that reveal whether thresholds, trends, and ownership are clear

Once a metric is tied to risk, test whether it is governed well.

What is the acceptable range for this indicator?

How long has it been moving in the wrong direction?

Who owns reduction of this risk, by name and role?

What is the plan if this crosses tolerance?

Weak answers here often reveal the real governance problem. The issue may not be the metric itself. The issue may be that no one agreed on risk appetite, no one defined escalation, or no executive owns the outcome.

If thresholds are fuzzy, the committee gets surprises. If owners are vague, the committee gets drift. If trend is missing, the committee gets a snapshot with no story.
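Two of the questions above, the acceptable range and how long the indicator has been moving in the wrong direction, can be answered mechanically once a tolerance exists. The following is a rough Python sketch under stated assumptions: the quarterly readings and the tolerance value are invented, and the function name is hypothetical.

```python
def consecutive_worsening(history, higher_is_worse=True):
    """Count the most recent consecutive period-to-period moves in the wrong direction."""
    count = 0
    # Walk backwards through adjacent (previous, current) pairs.
    for prev, curr in zip(reversed(history[:-1]), reversed(history[1:])):
        worsened = curr > prev if higher_is_worse else curr < prev
        if not worsened:
            break
        count += 1
    return count


# Made-up quarterly readings of one indicator (percent), oldest first.
readings = [4.0, 3.5, 5.0, 6.5, 8.0]
tolerance = 7.0  # assumed upper limit agreed with the committee

periods_worsening = consecutive_worsening(readings)
outside_tolerance = readings[-1] > tolerance

print(f"Worsening for {periods_worsening} consecutive periods")  # 3
print(f"Outside tolerance: {outside_tolerance}")                 # True
```

The sketch cannot answer the other two questions. Who owns the risk and what happens when tolerance is crossed are management commitments, not calculations.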

For a broader set of audit committee cyber risk questions, look for the same pattern every time: exposure, threshold, owner, next move.

Questions that connect cyber and technology indicators to business consequence

Technical exposure matters only when you can translate it into business effect.

Which business processes would fail first if this risk materialized?

Which critical vendors would make this worse?

How much downtime or disruption could this create?

How prepared are we to operate through it?

These questions bring resilience into the room. They also help you test whether management has done the hard work of mapping systems, vendors, recovery limits, and fallback paths to real business operations.

This is also where incident readiness matters. A rising KRI without a workable response plan is worse than a rising KRI with tested decision paths. Good committees connect both. That is the heart of board incident response oversight.

What good KRI reporting looks like in the board packet

You should expect reporting that tells you what changed, why it matters, and what decision may be needed. That means smaller sets of indicators, not larger dashboards.

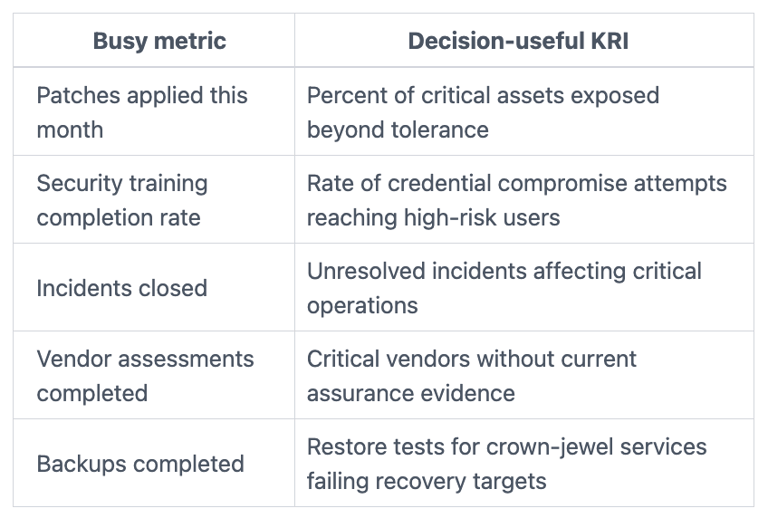

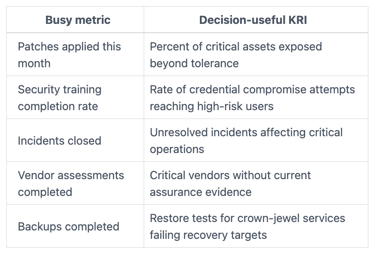

Use a simple side-by-side view of busy metrics versus decision-useful KRIs

A visual comparison makes the difference clear.

The takeaway is simple. The left column tracks work. The right column tracks exposure that may require action.

Show only the indicators that answer what changed and why it matters

Strong board reporting should include five things for each KRI: trend, threshold, business impact, owner, and next action. If one is missing, the committee has less than it needs.
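One way to enforce that checklist is to treat each KRI as a record and flag any entry with an empty element before it reaches the packet. The Python sketch below is illustrative only; the `KriEntry` class, its field names, and the sample entry are assumptions, not a prescribed template.

```python
from dataclasses import dataclass
from typing import Optional

# The five elements named above, as hypothetical field names.
REQUIRED_ELEMENTS = ("trend", "threshold", "business_impact", "owner", "next_action")


@dataclass
class KriEntry:
    name: str
    trend: Optional[str] = None
    threshold: Optional[str] = None
    business_impact: Optional[str] = None
    owner: Optional[str] = None
    next_action: Optional[str] = None


def missing_elements(entry):
    """Return the required elements that are absent or blank for this KRI."""
    return [
        element for element in REQUIRED_ELEMENTS
        if not (getattr(entry, element) or "").strip()
    ]


# Made-up example entry with no named owner.
entry = KriEntry(
    name="Critical internet-facing assets exposed beyond tolerance",
    trend="Rising for three quarters",
    threshold="Escalate above 10 percent",
    business_impact="Customer-facing outage and data exposure",
    next_action="Fund accelerated remediation for overdue assets",
)

print(missing_elements(entry))  # ['owner']
```

An entry that returns an empty list is complete in form only. Whether the threshold is sensible or the owner has real authority still takes committee judgment.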

That is why board reporting for cybersecurity should stay tight and decision-shaped. Long dashboards with no escalation logic create delay. A smaller set of KRIs tied to material exposure creates focus.

You do not need twenty metrics. In most cases, you need a focused set tied to the few failures that could hurt the business most.

How to use these questions in your next audit committee meeting

You can improve signal quickly, even if your current reporting is still too operational.

Start with three questions if the reporting is still too operational

If time is tight, ask these three first:

Which three indicators best show material business exposure today?

What thresholds require escalation to this committee or the board?

Where do we lack enough visibility to judge risk confidently?

Those questions do two things. First, they force management to prioritize. Second, they expose blind spots without dragging the committee into technical detail.

Ask management to replace noise with a smaller set of board-level KRIs

Before the next quarter, ask for fewer metrics and better framing. Request explicit thresholds, clear business impact, named owners, and a short statement of what action would follow if the number worsens.

This aligns with broader board cyber governance best practices, because the goal is not more reporting. The goal is oversight that works under pressure.

Questions audit committees often raise

Can a metric be useful even if it is not a KRI?

Yes. Activity metrics still matter for management. They help run the program, allocate staff time, and track execution. The mistake is bringing them to the committee as if they prove reduced business exposure.

How many KRIs should an audit committee review regularly?

Usually a focused set is enough. Many committees can govern well with roughly six to ten board-level KRIs tied to material exposure. More than that often weakens attention and muddies escalation.

What if management cannot define thresholds yet?

Treat that as a governance issue, not a reporting detail. Missing thresholds often mean there is no shared view of tolerance, ownership, or escalation. That gap deserves attention now, because the committee cannot judge risk confidently without it.

Busy teams can produce endless updates. That doesn't help you if you still can't see material exposure. Your job is not to reward motion. Your job is to insist on KRIs that show trend, threshold, ownership, and business consequence.

Use these questions in your next meeting. Challenge any metric that can't explain what harm it warns about, what line it could cross, and what decision it should change. When reporting gets sharper, oversight gets calmer, and your decisions get more defensible.

Tyson Martin advises boards and CEOs on cyber risk, technology governance, AI oversight, and operational trust. He helps leadership teams turn weak reporting, unclear ownership, and rising exposure into clearer decisions and stronger oversight.

© 2026. All rights reserved.
