Why AI Governance for Boards Is Now a Leadership Issue

Learn why AI governance for boards is now a leadership issue, so you can clarify ownership, oversight, and reporting before risk outruns you.

AI governance for boards is no longer a future topic, and it isn't an IT side project. If AI can shape pricing, hiring, customer service, underwriting, forecasting, fraud controls, or product decisions, it already affects leadership outcomes.

That changes the board's job. You don't need to approve every tool or prompt. You do need clear ownership, clear boundaries, and clear reporting on where AI affects revenue, trust, legal exposure, operational continuity, or strategic speed.

Many companies now use AI without calling it a formal AI strategy. It shows up in vendor platforms, workflow tools, copilots, analytics, and automated decisions. So if your board still treats AI as a narrow technology topic, you're likely governing it too late. The issue now is leadership judgment, not technical curiosity.

Key takeaways leaders can use before the next board meeting

AI governance belongs in board oversight when AI affects money, trust, legal exposure, customer outcomes, or core operations.

Weak ownership is the fastest path to surprise. IT, legal, product, HR, and operations may each own a piece, while no one owns the whole.

Vendor tools create the same accountability problem as internal tools. Buying AI from a supplier doesn't transfer responsibility.

Good oversight is simple. You need named ownership, use boundaries, risk tiers, escalation rules, and board-ready reporting.

Busy updates don't help. You need visibility into material use cases, risks, exceptions, and which decisions need leadership attention.

Before the next meeting, ask where AI is already in use, who approves higher-risk use, and what incidents or near misses have already surfaced.

Why AI moved from a technology topic to a boardroom issue

AI used to sound optional. Now it sits inside the business, often too far down to appear in strategy decks and outside the board's line of sight. It writes customer messages, ranks applicants, flags fraud, supports service agents, drafts code, and shapes forecasts. As a result, leadership exposure can grow before leadership even labels it "AI."

That is why AI governance for boards now belongs beside other enterprise risks. You are not being asked to manage models. You are being asked to oversee business consequences, decision rights, and accountability.

AI decisions now touch revenue, risk, and reputation

When AI affects pricing, service quality, claims decisions, credit decisions, hiring screens, or fraud alerts, it affects more than system output. It affects who gets served, who gets rejected, how fast work moves, and how much trust you keep.

Those are leadership outcomes. If a system makes poor recommendations, biases a decision, creates bad customer messaging, or speeds a bad choice, the business owns the result. The board can't treat that as a technical glitch.

In practice, AI now behaves like a new decision layer inside the company. That makes oversight a governance issue.

Boards are accountable even when AI enters through vendors

Many companies don't "build AI." They buy software that already includes it. A SaaS platform adds an assistant. A vendor automates reviews. A service provider uses AI to make recommendations or draft communications. Suddenly, AI sits inside important workflows.

That is why vendor dependence matters. Outsourcing the tool does not outsource the decision, the risk, or the public consequence. If you need a clearer frame for setting those boundaries, this guide on technology risk appetite for boards helps turn fuzzy concern into actual oversight.

Where AI governance usually breaks down inside companies

The failure is rarely a lack of smart people. More often, the structure is weak. AI adoption moves fast, while ownership, policy, and reporting stay scattered. Then leadership assumes someone else has it covered.

No one owns the full picture

IT may review tools. Security may review access and data exposure. Legal may review terms, privacy, and claims. Product may push adoption for speed. HR may approve hiring use. Operations may automate workflows.

Each group sees part of the issue. Few see the enterprise view.

That creates a familiar problem. Everyone is involved, but no one is clearly accountable for where AI is in use, which use cases are material, what controls apply, and when the board should hear about exceptions. If ownership still feels vague in your environment, strong board cyber governance practices offer a useful model for how leadership can structure oversight without sliding into management detail.

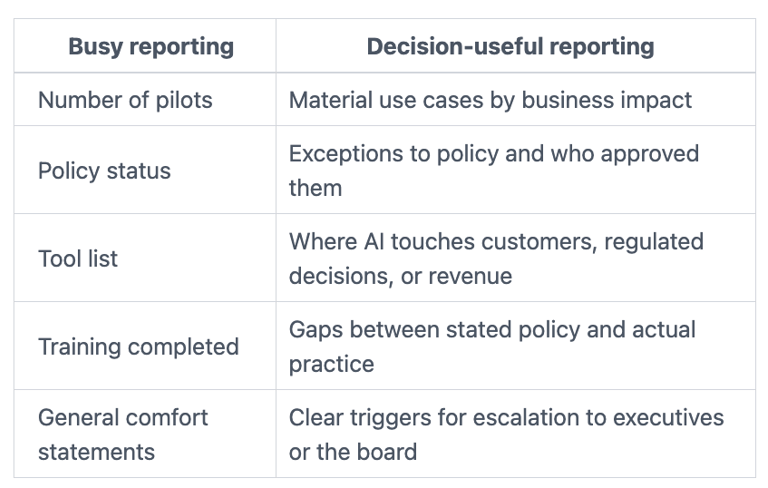

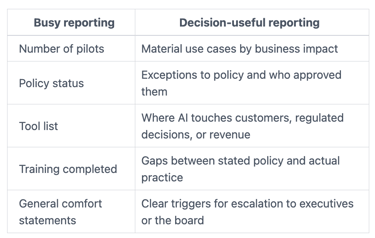

Boards get activity updates instead of decision-useful reporting

Many boards hear that a policy exists, a pilot launched, or a working group meets monthly. That sounds active. It doesn't tell you whether oversight is real.

The usual problem is the contrast between activity updates and decision-useful reporting.

The takeaway is simple. Activity is not oversight. If reporting doesn't show owners, risks, controls, exceptions, and thresholds, it won't help you govern. Stronger board reporting for cybersecurity programs points to the same discipline: translate technical motion into business decisions.

What good AI governance for boards looks like in practice

Good governance is not heavy process. It is a clean operating picture. You need to know who owns AI at the executive level, which uses are low-risk, which require review, and what reaches the board.

Clear ownership, clear rules, and clear escalation paths

Start with one named executive owner. That person does not have to run every use case. They do have to own the enterprise view, coordinate across functions, and bring material issues forward.

Next, define use boundaries. Low-risk drafting support is not the same as AI that affects customer outcomes, financial reporting, hiring, safety, regulated decisions, or core operations. Those uses need review before broad adoption.

Then set escalation rules. If an AI use case could create customer harm, legal exposure, major operational disruption, or brand damage, the rules should state in advance when it rises to the CEO, the committee chair, or the full board. The same logic used in board incident response oversight applies here: pre-decide who gets pulled in, when, and based on what threshold.

Good governance does not slow useful AI. It separates safe experimentation from material exposure.
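For teams that want to write escalation rules down in a form that can be checked, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a prescribed standard: the `Severity` levels, the `ESCALATION` routing table, and the `escalate` function are hypothetical names invented for this example, and the thresholds are placeholders your own leadership would set. Only the four impact categories come from the text above.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical severity scale; real thresholds are a leadership decision."""
    MINOR = 1
    SERIOUS = 2
    CRITICAL = 3


# Assumed routing table: who is pulled in at each severity level.
ESCALATION = {
    Severity.MINOR: "executive AI owner",
    Severity.SERIOUS: "CEO and committee chair",
    Severity.CRITICAL: "full board",
}

# Impact categories named in the article.
IMPACT_CATEGORIES = {
    "customer harm",
    "legal exposure",
    "major operational disruption",
    "brand damage",
}


def escalate(impacts: dict) -> str:
    """Return who must be informed, based on the worst-rated impact."""
    unknown = set(impacts) - IMPACT_CATEGORIES
    if unknown:
        raise ValueError(f"unrecognized impact categories: {sorted(unknown)}")
    if not impacts:
        return "no escalation"
    return ESCALATION[max(impacts.values())]


# Example: a use case rated serious on legal exposure, minor on brand damage.
rated = {"legal exposure": Severity.SERIOUS, "brand damage": Severity.MINOR}
print(escalate(rated))
```

The design choice worth noting is that routing follows the single worst impact, so one serious exposure is not averaged away by several minor ones.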

Oversight that balances speed with control

You do not need to treat every AI use case like a crisis. That would freeze adoption and push use underground. Instead, separate low-risk experimentation from higher-stakes use.

A practical model is tiered. Low-risk internal productivity tools can move fast under policy. Medium-risk uses need management review. High-risk uses (those touching customers, regulated decisions, sensitive data, or core operations) need formal sign-off and regular reporting.
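The tiered model can be sketched as a small classification rule. This is a rough illustration, not a definitive scheme: the `UseCase` fields, the `Tier` names, and the `classify` function are assumptions made for the example. The high-risk triggers follow the text; the rule that anything not clearly low-risk defaults to medium is an added assumption, since the article does not define the medium tier in detail.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "move fast under policy"
    MEDIUM = "management review"
    HIGH = "formal sign-off and regular reporting"


@dataclass
class UseCase:
    """Hypothetical record describing one AI use case."""
    name: str
    touches_customers: bool = False
    regulated_decision: bool = False
    sensitive_data: bool = False
    core_operations: bool = False
    internal_productivity_only: bool = False


def classify(use_case: UseCase) -> Tier:
    """Assign a tier using the high-risk triggers named in the article."""
    if (use_case.touches_customers or use_case.regulated_decision
            or use_case.sensitive_data or use_case.core_operations):
        return Tier.HIGH
    if use_case.internal_productivity_only:
        return Tier.LOW
    # Assumption: anything not clearly low-risk defaults to review.
    return Tier.MEDIUM


# Illustrative examples only; how each is flagged is a judgment call.
drafting = UseCase("meeting-notes drafting", internal_productivity_only=True)
screening = UseCase("applicant screening", regulated_decision=True)
forecast = UseCase("internal demand forecast")

for uc in (drafting, screening, forecast):
    tier = classify(uc)
    print(f"{uc.name}: {tier.name} -> {tier.value}")
```

Checking the high-risk triggers first means a tool cannot be labeled low-risk just because it started life as an internal productivity aid.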

This is where AI governance for boards becomes useful rather than performative. You are not blocking innovation. You are making risk legible. That often helps the company move faster because teams know the rules before the stakes rise.

The questions boards and CEOs should ask right now

If you want to know whether your oversight is real, ask for plain answers to plain questions.

Questions that reveal whether AI oversight is real or performative

Where is AI already in use today, including vendor tools and embedded features?

Which uses affect customers, hiring, regulated decisions, financial reporting, or critical operations?

Who approves higher-risk use cases, by name and role?

What data can approved tools access, retain, or send outside the company?

What reporting reaches executive leadership and the board, and how often?

What incidents, near misses, complaints, or policy exceptions have already occurred?

Where does practice differ from policy right now?

If you need sharper prompts for committee use, these cyber risk questions audit committees should ask show how simple questions expose weak ownership fast.

The first governance moves if your oversight is still immature

Begin with an inventory of material AI use, not a perfect catalog of everything. Then assign executive ownership. After that, define three use-case tiers, set reporting expectations, and make clear when legal, risk, security, HR, product, or operations must be involved.

You can do this in weeks, not quarters, if you stay focused on decision rights and reporting. The first goal is not maturity theater. The first goal is to stop flying blind.
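As a starting point, the inventory described above can be kept as simple structured records and checked for gaps. The sketch below is one hedged way to do that: `InventoryEntry`, its fields, and `governance_gaps` are hypothetical names, and the sample entries are invented for illustration rather than drawn from any real company.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class InventoryEntry:
    """Hypothetical record for one material AI use."""
    use_case: str
    source: str                      # "internal" or "vendor"
    executive_owner: Optional[str]   # None if no one is named
    tier: Optional[str]              # "low", "medium", "high", or None
    regular_reporting: bool = False


def governance_gaps(entries: List[InventoryEntry]) -> List[Tuple[str, str]]:
    """List (use_case, gap) pairs where ownership, tiering, or reporting is missing."""
    gaps = []
    for entry in entries:
        if entry.executive_owner is None:
            gaps.append((entry.use_case, "no executive owner"))
        if entry.tier is None:
            gaps.append((entry.use_case, "not tiered"))
        elif entry.tier == "high" and not entry.regular_reporting:
            gaps.append((entry.use_case, "high-risk use without regular reporting"))
    return gaps


# Invented sample inventory, including one vendor-embedded feature.
inventory = [
    InventoryEntry("service-desk assistant", "vendor", None, None),
    InventoryEntry("fraud alert scoring", "internal", "Chief Risk Officer", "high"),
    InventoryEntry("email drafting aid", "internal", "CIO", "low"),
]

for use_case, gap in governance_gaps(inventory):
    print(f"{use_case}: {gap}")
```

Even a list this small answers the first three questions a board should ask: where AI is in use, who owns it, and whether the higher-risk uses are reported.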

Common questions leaders still ask about AI governance

Does every company need formal board oversight of AI?

Every company needs leadership clarity. Not every company needs the full board reviewing every AI use case. The test is materiality. If AI affects strategy, trust, legal exposure, customer outcomes, or core operations, then it needs board-level visibility.

Is AI governance just a legal or compliance issue?

No. Compliance matters, but it is only one part of the picture. You also need to govern decision quality, operational reliability, customer harm, brand trust, and recovery when something goes wrong.

Will stronger AI governance slow adoption?

Weak governance slows adoption more. It creates rework, surprise, and last-minute escalation. Clear rules usually speed good use because teams know what is allowed, what needs review, and who decides.

AI won't stay in the server room. It is already shaping decisions your customers, employees, regulators, and investors will judge. That is why AI governance for boards is now a leadership issue.

Before your next board cycle, pressure-test three things: who owns the full picture, what reporting reaches leadership, and when AI issues escalate. If any of those answers are fuzzy, your risk is already ahead of your governance.

Tyson Martin advises boards, CEOs, and executive teams on cyber risk, AI oversight, technology governance, and decision-making under pressure.