AI Policy Template for Boards That Holds Up Under Pressure

Use this AI Policy Template for Boards to set scope, ownership, review, and escalation so you can govern AI risk before pressure exposes gaps.

An AI Policy Template for Boards is a governance tool. You use it to set decision rights, define acceptable use, require accountability, and reduce avoidable surprises as AI spreads through products, operations, vendors, and internal workflows.

That matters now because your board may be asked to oversee systems it did not design and may not fully understand, yet could still be held responsible for. If you are a director, CEO, founder, or executive leader, you need a practical starting point, not theory. Strong AI oversight also needs to connect with broader board cyber governance best practices, because the failures often look familiar: weak reporting, fuzzy ownership, and late escalation.

Key takeaways you can use before the next board meeting

Define where the policy applies, including AI you build, buy, embed, or allow employees to use.

Name owners and decision rights, so approval and risk acceptance do not drift by default.

Classify AI use cases by business impact and risk, not by technical complexity alone.

Require review, testing, documentation, and human oversight before material use goes live.

Set reporting expectations for the board, including exceptions, incidents, vendor issues, and trend changes.

Create clear escalation paths, so management knows when an AI issue becomes a board issue.

What an AI policy for boards is really supposed to do

Your board-level AI policy should help leadership govern decisions. It should not tell data scientists how to tune a model or tell staff which prompt to write. You need a policy that sets guardrails for use, accountability, ethics, review, and oversight.

That distinction matters because boards often get dragged into either vague principle talk or technical detail. Neither helps you govern. A good policy gives management room to execute while giving the board a basis for challenge, approval, and escalation.

It also gives you a cleaner reporting structure. If you want the board to make sound calls, management needs to present AI use in business terms, similar to board reporting tied to business impact.

Set the guardrails, not the day-to-day controls

Your role is to define principles, risk tolerance, review thresholds, and escalation triggers. Management owns implementation, monitoring, and operating controls.

That split needs to be explicit. Shared ownership sounds cooperative, but under pressure it often means nobody decides. Your policy should state who approves use, who accepts risk, who can pause deployment, and when issues move up.

Cover the AI you build, buy, and quietly adopt

Your policy should cover internal tools, third-party platforms, embedded AI features, customer-facing systems, and employee use of outside tools. Many companies govern the visible use cases and miss the rest.

That creates blind spots. A vendor may add AI to software you already rely on. Staff may upload sensitive data into public tools. Product teams may launch an AI feature before anyone assesses harm, bias, privacy, or explainability. If the policy does not cover shadow AI and vendor dependence, it does not cover your real exposure.

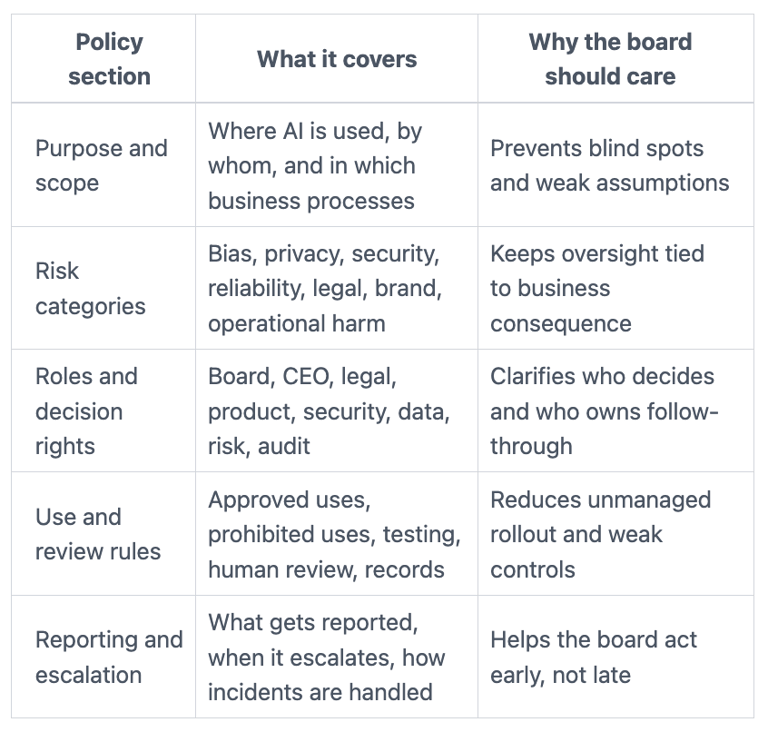

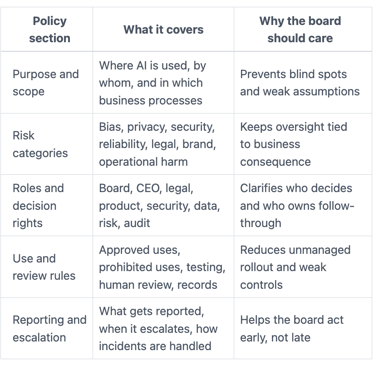

The core sections every AI Policy Template for Boards should include

A usable policy helps leadership make decisions, assign responsibility, and respond when something goes wrong. The structure can stay simple, as long as it answers the right questions.

The sections below give you a practical frame.

The value of the template is not length. The value is clarity.

Purpose, scope, and the risks the board is overseeing

Start with why the company uses AI and where it is in play. Then define what falls inside the policy. Include systems that shape customer outcomes, employee decisions, pricing, hiring, fraud controls, content generation, analytics, and vendor-supported automation.

Next, name the risk categories management must address. Keep the list short and practical: bias, privacy, security, reliability, legal exposure, brand harm, and operational disruption. Those categories give the board a common language for review.

Roles, decision rights, and who owns what

Your template should identify owners at each level: the board, the CEO, the executive team, legal, security, data, product, risk, and, when relevant, internal audit. Unclear ownership is one of the fastest ways governance fails.

Board-level thresholds matter here. You need to state what management can approve, what must be escalated, and what level of uncertainty exceeds acceptable tolerance. That discipline lines up with how boards set technology risk appetite.

Rules for use, review, testing, and approval

Your policy should define approved and prohibited uses, and it should require human review where warranted, testing before launch, change control, documentation, and periodic reassessment. Keep this at the oversight level.

You do not need a board policy that reads like a technical manual. You need one that says material AI use cannot go live without review, cannot change without control, and cannot remain unexamined after launch.

Reporting, escalation, and incident response expectations

Management should report material AI use cases, risk reviews, failed controls, vendor issues, customer impact, and incidents. The board also needs thresholds for escalation.

For example, escalation may be triggered when an AI use case affects regulated data, materially impacts customers, creates legal exposure, or produces harm at scale. If an AI failure becomes a business event, your response expectations should align with board incident response oversight.

If you cannot tell what would trigger escalation, your policy will fail when speed matters most.
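If your management team tracks AI issues in a system, the example triggers above can be written down as explicit checks. The sketch below is illustrative only: the `AIIssue` fields, the `escalation_reasons` function, and the 1,000-person figure for "harm at scale" are assumptions made for the example, not thresholds taken from any framework. Your own policy would set the real triggers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIIssue:
    """Hypothetical record of an AI issue raised by management."""
    touches_regulated_data: bool = False
    material_customer_impact: bool = False
    creates_legal_exposure: bool = False
    affected_people: int = 0


# Assumed cut-off for "harm at scale"; a real policy would choose its own number.
HARM_AT_SCALE_THRESHOLD = 1000


def escalation_reasons(issue: AIIssue) -> list[str]:
    """Return the triggers this issue meets. An empty list means no board escalation."""
    reasons = []
    if issue.touches_regulated_data:
        reasons.append("regulated data")
    if issue.material_customer_impact:
        reasons.append("material customer impact")
    if issue.creates_legal_exposure:
        reasons.append("legal exposure")
    if issue.affected_people >= HARM_AT_SCALE_THRESHOLD:
        reasons.append("harm at scale")
    return reasons


if __name__ == "__main__":
    issue = AIIssue(touches_regulated_data=True, affected_people=2500)
    print(escalation_reasons(issue))
```

The point of writing triggers this way is that each one is a yes-or-no test. If a trigger in your draft policy cannot be expressed that plainly, it probably needs sharper wording.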

Where AI oversight breaks down, even when a policy exists

Many policies look complete on paper and still fail in practice. The failure usually sits in governance routines, reporting quality, or decision rights.

The policy is broad, but no one can make a decision from it

A policy full of ethics language can sound reassuring while leaving leaders without a usable framework. If it says AI should be fair, safe, and responsible, but never defines approval thresholds, review triggers, or who can stop deployment, it does not help you decide.

Ambiguity gets expensive during fast decisions. It slows launches, weakens challenge, and creates confusion during incidents or public scrutiny.

AI oversight gets trapped in legal, IT, or a single vendor relationship

Siloed ownership creates blind spots. Legal may focus on terms. IT may focus on systems. Product may focus on speed. Vendors may shape your exposure more than your own staff does.

Your board can miss the real risk if nobody connects AI use to customer harm, cyber exposure, operational dependence, and trust. That is where independent judgment from a board-level cyber risk advisor can sharpen thresholds and escalation.

What good board-level AI governance looks like in practice

When the policy works, you can see it. Oversight becomes calmer, reporting becomes clearer, and decisions arrive faster.

You can see which AI uses matter most and who is accountable

Management keeps a current inventory of material AI use cases. Risks are tiered by impact. Owners are named. Approvals and exceptions are documented.

That improves defensibility. It also reduces surprises. You stop treating AI as a vague innovation topic and start governing it as a set of choices with known owners and known thresholds.
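For teams that keep the inventory in a spreadsheet or a simple system, a minimal sketch of the record and a gap check might look like the following. The field names, the three tiers, and the rule that medium and high tiers need a documented approval are assumptions for illustration. Your policy would define its own tiers and approval rules.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical impact tiers; higher means more business impact."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class UseCase:
    name: str
    owner: str      # empty string means no named owner
    tier: Tier
    approved: bool  # True when an approval is documented


def governance_gaps(inventory):
    """Return (use case name, gap) pairs for missing owners or approvals."""
    gaps = []
    for use_case in inventory:
        if not use_case.owner:
            gaps.append((use_case.name, "no named owner"))
        # Assumption: only MEDIUM and HIGH tiers require documented approval.
        if use_case.tier >= Tier.MEDIUM and not use_case.approved:
            gaps.append((use_case.name, "no documented approval"))
    return gaps


if __name__ == "__main__":
    inventory = [
        UseCase("resume screening", "Head of HR", Tier.HIGH, True),
        UseCase("support chatbot", "", Tier.MEDIUM, False),
        UseCase("meeting notes summarizer", "IT lead", Tier.LOW, False),
    ]
    for name, gap in governance_gaps(inventory):
        print(f"{name}: {gap}")
```

A list of gaps like this is the kind of exception view a board can act on without reading the whole inventory.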

The board gets trend-based reporting, not scattered updates

Useful reporting shows where AI is being used, what changed, where exceptions sit, what incidents occurred, and which decisions need attention. It also shows whether governance is getting stronger or weaker over time.

That reporting style builds confidence because it supports real discussion. It moves the room away from compliance theater and toward board conversations that inspire confidence.

Questions boards and executive teams should ask before approving the policy

A strong policy should survive challenge. These questions help you test whether it will.

What decisions are we trying to govern, and which ones are too risky to leave unclear?

Focus on materiality first. Which AI uses could affect customers, revenue, legal exposure, safety, or trust? Which uses need pre-approval? Which uses are prohibited? Which changes require re-review?

Many of the same habits used in cyber oversight apply here. A short list of cyber risk questions audit committees should ask can also sharpen how you challenge AI governance.

How will we know this policy is working six months from now?

Ask for measurable signs. You should expect better inventory visibility, fewer unreviewed deployments, clearer ownership, better reporting, and faster escalation when issues appear.

If management cannot describe how progress will be measured, the policy may signal good intent without changing practice.
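One of those signs, fewer unreviewed deployments, is simple to measure if management counts deployments and reviews each period. The sketch below is a hypothetical example: the function name and the sample counts are invented for illustration, and a real report would draw on your own inventory.

```python
def unreviewed_share(deployed: int, reviewed: int) -> float:
    """Fraction of deployed AI use cases that went live without review."""
    if deployed < 0 or reviewed < 0 or reviewed > deployed:
        raise ValueError("counts must satisfy 0 <= reviewed <= deployed")
    if deployed == 0:
        return 0.0
    return (deployed - reviewed) / deployed


if __name__ == "__main__":
    # Invented sample counts for two reporting periods.
    at_adoption = unreviewed_share(deployed=20, reviewed=12)
    six_months_later = unreviewed_share(deployed=25, reviewed=22)
    print(f"At adoption: {at_adoption:.0%} unreviewed")
    print(f"Six months later: {six_months_later:.0%} unreviewed")
```

A falling share over successive periods is one piece of evidence that the policy is changing practice, not only signaling intent.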

A simple next move: adapt the template, assign owners, and test it

Your best first step is not writing a perfect policy. It is adapting a clear template, confirming ownership, setting review thresholds, and testing the policy against a real use case.

Use the next board cycle to pressure-test one AI vendor, one internal use case, or one customer-facing feature. Review who approves it, what risks apply, what gets reported, and what would trigger escalation. That exercise will show you where language is clear and where it still hides uncertainty.

FAQ: what leaders still ask about AI policy templates for boards

Does the board need a separate AI policy?

Often, yes. You can align it with existing technology, cyber, data, and risk policies, but AI introduces decision points that deserve direct board attention.

How often should the policy be reviewed?

At least annually. Review it sooner after a material AI deployment, incident, acquisition, major vendor change, or legal shift.

Who should own the policy in management?

Usually the CEO sponsors it, with named operating owners across legal, product, data, security, and risk. One function alone should not carry it.

Should the policy apply to third-party AI tools?

Yes. Vendor-provided AI can create your risk even when the model is not yours.

How does AI policy connect to cyber and data governance?

They overlap heavily. Privacy, access, vendor risk, model integrity, incident handling, and board reporting should work as one governance picture.

Sources referenced: NIST AI Risk Management Framework 1.0, OECD AI Principles, SEC cybersecurity disclosure rules for governance context.

About the author: Tyson Martin advises boards and CEOs on cyber risk, AI oversight, governance, and operational readiness. He helps leadership teams make clearer, faster, and more defensible decisions when technology risk becomes a board issue.

A strong AI Policy Template for Boards helps you make clearer, faster, and more defensible decisions when AI creates risk, uncertainty, or public scrutiny. That is the point.

Before your next meeting, review your current policy or draft and ask a simple question. Does it clarify scope, ownership, reporting, and escalation, or does it only signal good intent?

© 2026. All rights reserved.
